AI Automation vs AI Agents in 2026: A Practical Decision Matrix for Enterprise Buyers

Most teams asking whether they need “AI agents” are really asking a messier question: do we need autonomy, or do we just need the workflow to stop sucking?

That distinction matters because the market is now full of expensive confusion. Vendors slap the word “agent” on anything with a prompt box. Internal teams over-spec the solution because autonomy sounds more strategic than automation. Leadership gets sold on a futuristic operating model when the actual business problem is painfully ordinary: too much manual triage, too many handoffs, too much swivel-chair work, too little visibility into what the hell is happening between intake and outcome.

So let’s cut through it.

In most enterprises, AI automation and AI agents are not interchangeable. They solve different classes of problems, carry different cost structures, and fail in different ways. Choosing the wrong one is not a technical nuisance. It is a budget leak. It slows implementation, creates governance drag, and makes ROI harder to prove.

The numbers are already warning people. Gartner predicted that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025 because of poor data quality, weak risk controls, rising cost, or unclear business value. McKinsey’s 2025 State of AI research found 78% of organizations use AI in at least one business function, yet fewer than one-third follow most of the practices linked with real bottom-line impact. Bain’s enterprise survey added an even sharper point: while roughly 80% of executives said generative AI initiatives met or exceeded expectations, only 23% could tie them to measurable revenue gains or cost reductions.

That gap exists because too many companies start with the technology label instead of the operating problem.

This article gives you a buyer-side decision matrix for 2026. Not hype. Not theory. A practical way to decide whether you need rule-driven automation, semi-autonomous AI workflows, or full agentic behavior for a specific use case. If you own operations, revenue, support, compliance, knowledge work, or enterprise transformation, this is the call you need to make before vendors make it for you.

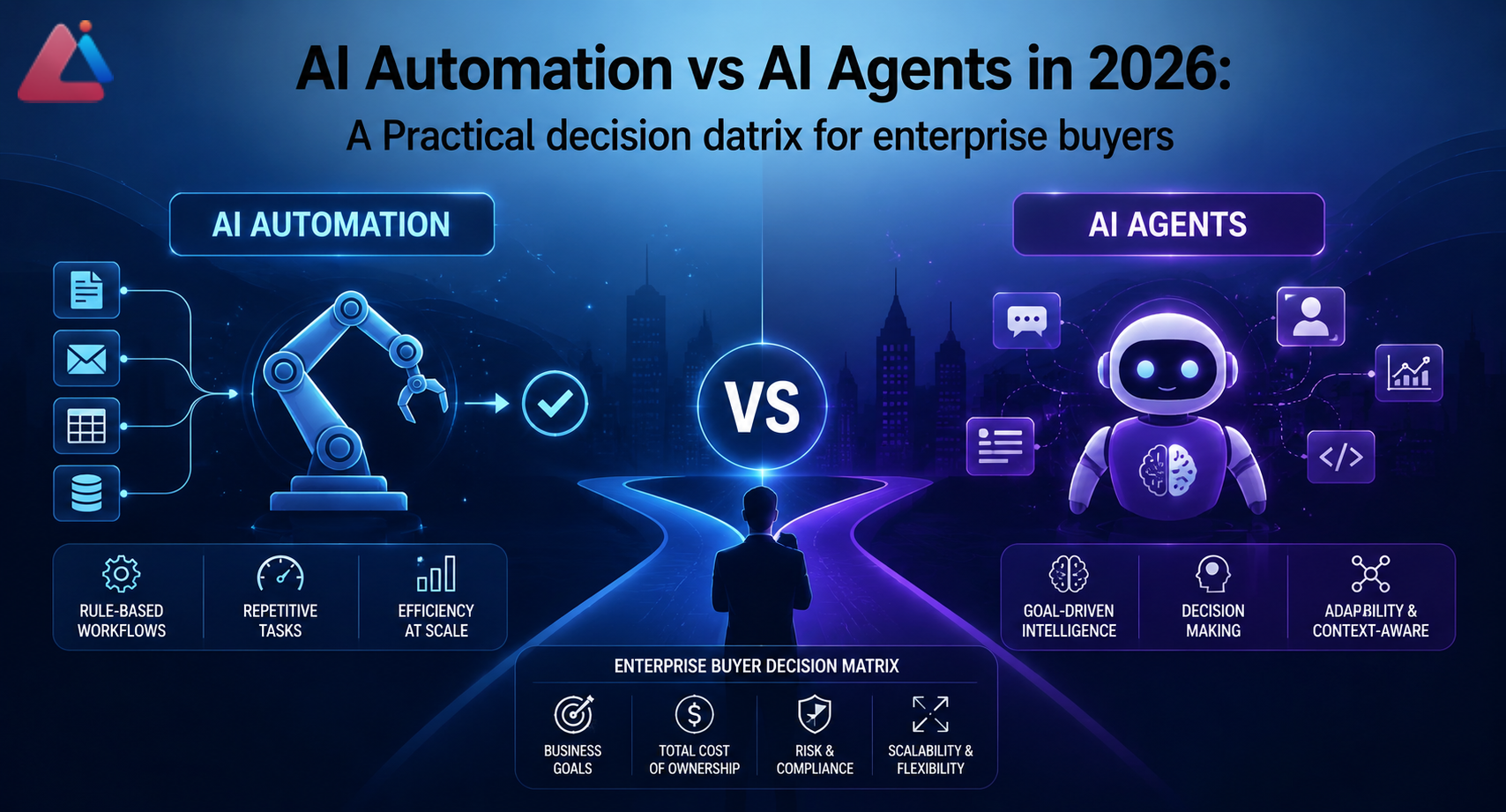

1. Start with the blunt definition: what is AI automation, and what is an AI agent?

AI automation is the broader and usually safer category. It means using AI inside a workflow that is still structurally controlled by predefined steps, triggers, approvals, and system boundaries. The model can classify, summarize, extract, draft, route, score, or recommend—but the flow itself is orchestrated by deterministic logic.

Think invoice extraction, lead routing, call summarization, support ticket categorization, contract redlining assistance, knowledge retrieval with human approval, or CRM data enrichment with confidence thresholds. The workflow may be smart, but it is still governed.

AI agents are different. An agent is designed to pursue a goal with some degree of autonomy. It can decide what steps to take, choose tools, iterate, retrieve context, plan sub-tasks, and adapt based on intermediate results. In practice, enterprise agents often sit on top of LLMs, tool-calling, retrieval, memory, and workflow orchestration.

The clean way to frame the difference:

– Automation = “Here is the flow. AI improves one or more steps.”

– Agent = “Here is the goal. AI helps decide the flow.”

That does not mean agents are always better. Usually, the opposite is true. Most business processes do not need open-ended autonomy. They need narrower intelligence inside a controlled process.

IBM’s enterprise framing around agentic AI and workflow automation makes this point indirectly: the value appears when AI can reason over tasks and act across tools, but enterprise viability still depends on guardrails, observability, and workflow fit. AWS makes a similar distinction in its prescriptive guidance for RAG and LLM systems: the more dynamic the system behavior, the more architecture, evaluation, and governance discipline you need.

If the work has stable inputs, repeatable rules, clear exception handling, and measurable output, automation is usually the better first choice. If the work is variable, multi-step, tool-heavy, and impossible to fully pre-map without losing most of the value, an agent may be worth the overhead.
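To make the flow-versus-goal distinction concrete, here is a minimal Python sketch. Everything in it is hypothetical: `classify` stands in for a model call, `toy_tools` stands in for system integrations, and `toy_policy` stands in for an LLM planner. In the automation version the code fixes the order of steps; in the agent version a policy picks the next step from intermediate state until it reports the goal is met or a step budget runs out.

```python
from typing import Callable, Optional


def classify(ticket: str) -> str:
    """Hypothetical stand-in for a model call that labels a ticket."""
    return "billing" if "invoice" in ticket.lower() else "general"


def automation_pipeline(ticket: str) -> list[str]:
    """Automation: the flow is fixed in code; AI improves one step."""
    steps = []
    label = classify(ticket)                                 # AI-assisted step
    steps.append(f"classified:{label}")
    queue = "finance" if label == "billing" else "support"   # deterministic rule
    steps.append(f"routed:{queue}")
    return steps


def agent_loop(goal: str,
               tools: dict[str, Callable[[dict], dict]],
               choose_next_action: Callable[[str, dict], Optional[str]],
               max_steps: int = 5) -> list[str]:
    """Agent: the goal is fixed; a policy picks the next tool from current state."""
    state: dict = {}
    trace = []
    for _ in range(max_steps):                  # hard step budget as a guardrail
        action = choose_next_action(goal, state)
        if action is None:                      # policy reports the goal is met
            break
        state = tools[action](state)            # each tool returns updated state
        trace.append(action)
    return trace


# Toy policy standing in for an LLM planner: gather context, then resolve.
def toy_policy(goal: str, state: dict) -> Optional[str]:
    if "context" not in state:
        return "fetch_context"
    if "resolved" not in state:
        return "resolve"
    return None


toy_tools = {
    "fetch_context": lambda s: {**s, "context": "account history"},
    "resolve": lambda s: {**s, "resolved": True},
}

print(automation_pipeline("Invoice #42 is wrong"))         # ['classified:billing', 'routed:finance']
print(agent_loop("resolve ticket", toy_tools, toy_policy))  # ['fetch_context', 'resolve']
```

The `max_steps` budget is there because an agent loop, unlike the fixed pipeline, has no natural end point unless you give it one.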

2. The 2026 decision matrix: when to choose automation vs agents

Here is the simple buyer rule: choose the least autonomous system that can still produce the business outcome.

That is the highest-ROI default because autonomy adds cost, variance, monitoring burden, and risk.

Choose AI automation when:

- The process already exists and mostly works, but is too slow, manual, or inconsistent.

- Inputs are semi-structured but familiar: emails, forms, tickets, call notes, PDFs, CRM records.

- The business wants predictable output and auditability.

- Exceptions can be routed to a human queue.

- You need a 30- to 90-day ROI path, not a six-month experimentation loop.

- Compliance, security, or brand risk is non-trivial.

Choose AI agents when:

- The task is goal-based rather than step-based.

- Users currently bounce across multiple systems to gather context, decide next actions, and execute them.

- The environment changes frequently, and fixed logic becomes brittle fast.

- The value comes from dynamic planning, prioritization, or tool use.

- A human remains in the loop for review, escalation, or approval.

- The organization can support evaluation, tracing, and policy controls.

Avoid both and redesign first when:

- The underlying process is broken or politically contested.

- Source data is garbage.

- Owners disagree on success metrics.

- Nobody can define the failure threshold.

- Leadership wants “AI” as theater instead of process improvement.

That last bucket is more common than vendors admit.
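The “least autonomous system” rule and the redesign checklist can be encoded as a small gate. This is a minimal sketch, assuming three ordered autonomy levels (the rule-driven, semi-autonomous, and agentic options from the introduction) and hypothetical boolean fields for each checklist item. The mapping from requirements to levels is one illustrative choice, not a standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    # Ordered from least to most autonomous.
    AUTOMATION = 1        # rule-driven flow, AI inside individual steps
    SEMI_AUTONOMOUS = 2   # AI proposes next steps, rules or humans approve
    AGENT = 3             # AI plans and executes multi-step work toward a goal


@dataclass
class UseCase:
    # "Avoid both and redesign first" blockers (hypothetical field names).
    process_broken_or_contested: bool
    source_data_unreliable: bool
    success_metrics_agreed: bool
    failure_threshold_defined: bool
    # What the business outcome genuinely requires.
    needs_dynamic_planning: bool
    needs_tool_selection: bool
    governance_ready: bool  # evaluation, tracing, and policy controls in place


def recommend(uc: UseCase) -> str:
    if (uc.process_broken_or_contested or uc.source_data_unreliable
            or not uc.success_metrics_agreed or not uc.failure_threshold_defined):
        return "REDESIGN"
    level = Autonomy.AUTOMATION  # start from the least autonomous option
    if uc.needs_tool_selection:
        level = max(level, Autonomy.SEMI_AUTONOMOUS)
    if uc.needs_dynamic_planning:
        level = max(level, Autonomy.AGENT)
    # Without evaluation/tracing/policy maturity, step back from a full agent.
    if level == Autonomy.AGENT and not uc.governance_ready:
        level = Autonomy.SEMI_AUTONOMOUS
    return level.name


pilot = UseCase(process_broken_or_contested=False, source_data_unreliable=False,
                success_metrics_agreed=True, failure_threshold_defined=True,
                needs_dynamic_planning=True, needs_tool_selection=True,
                governance_ready=False)
print(recommend(pilot))  # SEMI_AUTONOMOUS
```

The redesign check runs first on purpose: no level of autonomy fixes a broken process or garbage data.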

3. Cost reality: agents are usually more expensive than automation, and not just in model usage

Buyers often compare cost at the API level. That is rookie math.

The real cost stack includes architecture, integration, prompt and evaluation work, exception handling, governance, monitoring, user training, security review, and ongoing tuning. Gartner noted enterprise generative AI deployments can range from $5 million to $20 million, depending on ambition and implementation path. That does not mean your project should cost that much. It does mean sloppy assumptions get expensive fast.

A practical cost comparison looks like this:

AI automation cost profile

- Lower orchestration complexity

- Easier integration into existing BPM/RPA/CRM/helpdesk stacks

- Simpler testing because outputs are narrower

- Lower latency and token usage in many cases

- Fewer edge-case branches to monitor

- Faster time to pilot and scale

AI agent cost profile

- More tool wiring and permission design

- More evaluation work across sequences, not just single outputs

- Higher variance in behavior and therefore more QA overhead

- More tracing and observability requirements

- Bigger security and access questions

- More human review design if the agent can take impactful actions

In plain English: automation is usually cheaper to ship, cheaper to govern, and cheaper to trust.

That does not make agents bad investments. It makes them selective investments.

If one agent can collapse five browser-based support tasks, cut resolution time by 35%, improve first-response quality, and prevent backlog growth without adding headcount, the higher complexity may be justified. But if you are using an agent to summarize documents and push a field update into a CRM, you are probably lighting money on fire for no reason.
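One way to avoid API-level math is to compare full first-year cost stacks against the extra value each option delivers. The sketch below is hypothetical: the `CostStack` fields condense the cost lines listed above, and the figures in the usage example are placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class CostStack:
    build: float                      # one-time: architecture, integration, eval work, security review, training
    model_usage: float                # annual API/token spend
    monitoring_and_governance: float  # annual tracing, QA, review, compliance effort
    tuning_and_support: float         # annual prompt/eval maintenance and support

    def first_year_total(self) -> float:
        return (self.build + self.model_usage
                + self.monitoring_and_governance + self.tuning_and_support)

    def api_share(self) -> float:
        """Fraction of first-year cost that an API-only comparison would see."""
        return self.model_usage / self.first_year_total()


def agent_is_justified(automation: CostStack, agent: CostStack,
                       automation_value: float, agent_value: float) -> bool:
    """True if the agent's extra first-year value exceeds its extra first-year cost."""
    extra_cost = agent.first_year_total() - automation.first_year_total()
    extra_value = agent_value - automation_value
    return extra_value > extra_cost


# Placeholder figures for illustration only.
automation = CostStack(build=100_000, model_usage=10_000,
                       monitoring_and_governance=20_000, tuning_and_support=20_000)
agent = CostStack(build=250_000, model_usage=40_000,
                  monitoring_and_governance=60_000, tuning_and_support=50_000)

print(agent_is_justified(automation, agent, automation_value=200_000, agent_value=500_000))  # True
print(agent_is_justified(automation, agent, automation_value=200_000, agent_value=400_000))  # False
```

With these placeholder inputs, model usage is only 10% of the agent's first-year cost, which is why comparing token bills alone understates the gap.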

4. Time-to-value: automation usually wins the first 90 days

Enterprise buyers should care less about what demos well and more about what survives implementation.

Automation has a major advantage here: you can scope it to a narrow process, define the input and output shape, layer in confidence thresholds, and deploy human review where needed. That makes it much more likely to show measurable value within one quarter.
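A minimal sketch of confidence-threshold routing, assuming the model returns a per-field confidence score in [0, 1]. The threshold values are hypothetical and would need calibrating against real documents; gating on the weakest field is one conservative design choice among several.

```python
from dataclasses import dataclass


@dataclass
class Extraction:
    field: str
    value: str
    confidence: float  # assumed model-reported or calibrated score in [0, 1]


def route(extractions: list[Extraction],
          auto_threshold: float = 0.9,     # hypothetical: auto-approve above this
          review_threshold: float = 0.6    # hypothetical: human review above this
          ) -> str:
    """Route a document by its least confident extracted field."""
    if not extractions:
        return "exception_queue"           # nothing extracted: treat as an exception
    lowest = min(e.confidence for e in extractions)
    if lowest >= auto_threshold:
        return "straight_through"
    if lowest >= review_threshold:
        return "human_review"
    return "exception_queue"


print(route([Extraction("invoice_total", "1200.00", 0.97),
             Extraction("vendor_name", "Acme", 0.74)]))  # human_review
```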

McKinsey’s research keeps reinforcing a boring but important point: companies that scale AI well are not the ones with the flashiest pilots. They are the ones that tie deployment to workflow redesign, adoption, operating discipline, and measurable business outcomes.

A realistic timeline comparison:

Typical AI automation timeline

- Weeks 1–2: process mapping, KPI baseline, data sampling

- Weeks 3–5: prototype on real documents/tickets/records

- Weeks 6–8: workflow integration and human review layer

- Weeks 9–12: limited rollout, QA, accuracy tuning, dashboarding

Typical AI agent timeline

- Weeks 1–3: use-case decomposition, tool access design, policy constraints

- Weeks 4–6: reasoning flow design, tool-calling, retrieval, memory decisions

- Weeks 7–10: evaluation framework, failure case testing, intervention policies

- Weeks 11–16+: pilot rollout, tracing, trust calibration, escalation design

Can agents move faster than that? Sure. Can they also implode because the team skipped evaluation and gave the model too much freedom? Absolutely.

If the business wants visible ROI this quarter, automation is the safer bet nine times out of ten.

5. Risk and governance: the autonomy tax is real

The more freedom a system has, the more governance you need. This is not anti-agent bias. It is basic operational reality.

NIST’s AI Risk Management Framework exists for a reason. As systems become harder to predict, organizations need stronger controls around validity, accountability, transparency, privacy, and ongoing monitoring. Deloitte’s enterprise AI research also keeps landing on the same issue: enthusiasm is high, but operational trust, governance, and risk maturity lag behind adoption.

Here is where automation has the edge:

– Easier audit trails

– Tighter scope boundaries

– More reliable exception handling

– Cleaner role design for human approval

– Lower blast radius when outputs are wrong

Here is where agents get tricky:

– They may choose an unexpected path even if the end goal is reasonable.

– Weak retrieval feeds the planner bad context, which distorts the plan.

– Tool permissions can quietly create security exposure.

– Errors compound across steps.

– Non-determinism makes failure analysis harder.
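The compounding point is simple arithmetic if you assume each step succeeds independently with the same probability p: an n-step chain completes cleanly with probability p^n. Real agent steps are rarely independent, so treat this sketch as a rough illustration rather than a forecast.

```python
def chain_success_rate(per_step_success: float, steps: int) -> float:
    """Probability that all steps succeed, assuming independent, equally reliable steps."""
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be in [0, 1]")
    return per_step_success ** steps


def required_per_step(target_chain_success: float, steps: int) -> float:
    """Per-step reliability needed to hit a target end-to-end success rate."""
    return target_chain_success ** (1.0 / steps)


for n in (1, 5, 10, 20):
    print(n, round(chain_success_rate(0.95, n), 3))
# 1 0.95
# 5 0.774
# 10 0.599
# 20 0.358
```

Under these assumptions, a step that works 95% of the time yields a 10-step chain that completes cleanly only about 60% of the time, and hitting 90% end-to-end over 10 steps needs roughly 99% reliability per step.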

If the use case touches customer communication, pricing, compliance, claims, legal content, identity, payments, or production infrastructure, do not hand-wave governance. That is how teams create a sexy demo and a miserable postmortem.
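One way to keep tool permissions from quietly creating exposure is an explicit gate in front of every tool call. This is a minimal sketch: the tool names, the allowlist, and the rule that refunds always need human approval are hypothetical policy choices, and a real deployment would persist the audit log rather than hold it in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: tools the agent may call at all, and tools that need a human.
ALLOWED_TOOLS = {"lookup_account", "draft_reply", "update_ticket", "issue_refund"}
APPROVAL_REQUIRED = {"issue_refund"}  # payments: never executed autonomously


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, tool: str, decision: str) -> None:
        self.entries.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "tool": tool,
            "decision": decision,
        })


def gate_tool_call(tool: str, approved_by_human: bool, log: AuditLog) -> bool:
    """Return True if the call may proceed; every decision is logged."""
    if tool not in ALLOWED_TOOLS:
        log.record(tool, "denied:not_allowlisted")
        return False
    if tool in APPROVAL_REQUIRED and not approved_by_human:
        log.record(tool, "held:awaiting_approval")
        return False
    log.record(tool, "allowed")
    return True
```

Denials and holds are logged alongside approvals, because failure analysis in a non-deterministic system depends on seeing what the agent tried, not just what it did.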

6. Use-case fit: where automation dominates, and where agents actually earn their keep

This is where buyers need a spine. Not every knowledge workflow needs an agent.

Strong fit for AI automation

- Support triage and routing: classify tickets, extract intent, suggest replies, route by urgency, attach relevant knowledge, and escalate edge cases.

- Sales ops and CRM hygiene: summarize calls, detect next steps, score lead quality, enrich accounts, identify missing fields, and create follow-up tasks.

- Finance document workflows: extract invoice data, flag anomalies, reconcile records, summarize exceptions, and feed approval queues.

- Procurement and vendor intake: standardize intake forms, summarize vendor responses, route by risk tier, and prepare due diligence packs.

- Internal knowledge retrieval with review: pull policy answers or SOP snippets from trusted sources, then let a human validate before broad use.

Strong fit for AI agents

- Multi-system service resolution: gather account context, inspect prior issues, check entitlements, draft action paths, execute allowed updates, and prepare a human-ready resolution summary.

- Complex revenue operations orchestration: review inbound accounts, pull firmographic signals, inspect CRM history, draft outreach strategy, trigger enrichment tools, and prepare account-specific next actions.

- Operational command centers: monitor multiple data sources, detect threshold breaches, investigate contributing signals, propose responses, and open sub-tasks for teams.

- Research-heavy internal copilots: retrieve from multiple knowledge systems, compare options, build a recommendation path, and cite evidence across tools.

- Enterprise task chains with branching logic: any workflow where the next best step depends on fresh context, not a static rule tree.

The test is simple: if a static flow captures 80% of the value, do not overbuild with agents.

7. Field reality: what actually fails in real projects

Here is the part people skip in strategy decks.

Most AI projects do not fail because the model is dumb. They fail because the operating environment is messy.

In the field, the common failure pattern looks like this:

– The team chooses an agent because leadership wants something “more advanced.”

– Nobody agrees on what autonomy is actually required.

– Tool access gets bolted on before policy boundaries are clear.

– Evaluation focuses on happy-path demos.

– Exception queues are undefined.

– Frontline users do not trust the output, so they recreate the work manually.

– Six weeks later, the project technically works and commercially underperforms.

That is not an AI problem. That is bad operating design wearing an AI costume.

A narrower automation approach often wins because it is easier to trust, easier to debug, and easier to improve. Teams can see where the model helps, where humans intervene, and where error rates spike. That visibility compounds. Agent projects can absolutely work, but only when the organization is honest about complexity and willing to invest in controls.

If your team cannot yet run a disciplined automation program, jumping straight to agents is usually ego, not strategy.

8. A practical enterprise decision matrix you can use this week

Use the following matrix before signing anything or greenlighting a build.

Decision dimensions

1. Process stability

– Stable process with known steps → Automation

– Fluid process with changing paths → Agent

2. Variability of inputs

– Repeating input patterns → Automation

– Wide input diversity requiring interpretation across tools → Agent

3. Consequence of errors

– High-risk domain needing strong control → Automation first

– Moderate-risk domain with review gates → Agent may fit

4. Need for tool selection/planning

– Fixed system actions → Automation

– Dynamic choice of tools/actions → Agent

5. Speed to ROI required

– 30–90 day value window → Automation

– Longer strategic build accepted → Agent

6. Governance maturity

– Weak evaluation and policy infrastructure → Automation

– Strong logging, review, policy, and model ops discipline → Agent possible

7. Human review design

– Human approval is core to the workflow → Automation-friendly

– Human acts as supervisor for goal completion → Agent-friendly

8. Change frequency in the environment

– Low change, predictable exceptions → Automation

– High change, brittle static rules → Agent

A good buyer move is to score each dimension from 1 (strongly favors automation) to 5 (strongly favors an agent) and force a decision memo before any build starts. If most of the answers lean toward control, repeatability, and auditability, choose automation. If they lean toward dynamic planning and multi-step execution, pilot an agent with tight guardrails.
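The scoring step fits in a few lines. This sketch assumes 1 means a dimension strongly favors automation and 5 strongly favors an agent. The dimension keys, the “majority of dimensions” reading of “most of the answers,” and the rule that a governance maturity score of 2 or lower forces automation are all illustrative assumptions.

```python
# One key per decision dimension, in matrix order (hypothetical names).
DIMENSIONS = (
    "process_stability",    # 1 = stable known steps, 5 = fluid changing paths
    "input_variability",    # 1 = repeating patterns, 5 = wide cross-tool diversity
    "error_consequence",    # 1 = high-risk domain, 5 = moderate risk with review gates
    "tool_planning_need",   # 1 = fixed actions, 5 = dynamic tool choice
    "speed_to_roi",         # 1 = 30-90 day window, 5 = longer build accepted
    "governance_maturity",  # 1 = weak eval/policy, 5 = strong logging and model ops
    "human_review_design",  # 1 = approval is core, 5 = human supervises goal completion
    "change_frequency",     # 1 = low change, 5 = high change, brittle rules
)


def decide(scores: dict[str, int]) -> str:
    missing = set(DIMENSIONS) - set(scores)
    if missing:
        raise ValueError(f"unscored dimensions: {sorted(missing)}")
    for name in DIMENSIONS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored 1-5")
    # Weak evaluation and policy infrastructure rules out an agent outright.
    if scores["governance_maturity"] <= 2:
        return "automation"
    lean_automation = sum(1 for d in DIMENSIONS if scores[d] <= 2)
    lean_agent = sum(1 for d in DIMENSIONS if scores[d] >= 4)
    majority = len(DIMENSIONS) // 2 + 1  # 5 of 8
    if lean_automation >= majority:
        return "automation"
    if lean_agent >= majority:
        return "agent_pilot_with_guardrails"
    return "no_clear_lean"  # write the trade-offs into the decision memo


scores = {d: 2 for d in DIMENSIONS}
scores["governance_maturity"] = 4
scores["tool_planning_need"] = 5
print(decide(scores))  # automation
```

A “no_clear_lean” result is useful in its own right: it signals that the memo needs to argue the case rather than point at a score.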

9. Benchmarks that matter more than hype metrics

Stop evaluating these systems on demo impressiveness. Evaluate them like operating assets.

For AI automation, track:

- Cycle time reduction

- Straight-through processing rate

- Human touch time saved

- Exception rate

- Accuracy/precision on critical fields

- SLA improvement

- Cost per completed task

For AI agents, track:

- Goal completion rate

- Human intervention rate

- Average steps per successful task

- Tool execution success rate

- Recovery rate after failure

- Escalation quality

- Business outcome per session or per workflow

Stanford’s AI Index keeps showing broad adoption, but adoption is not the finish line. The real metric is durable business performance under real-world conditions.

If your dashboard cannot connect the AI layer to revenue, margin, speed, or capacity, you are measuring theater.
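Several of the metrics above fall straight out of per-item and per-run logs. A minimal sketch, assuming hypothetical log records with the fields shown and assuming every automation item is eventually completed. Recovery rate, escalation quality, and business outcome need richer data (and usually human judgment), so they are left out.

```python
from dataclasses import dataclass


def automation_metrics(outcomes: list[str], total_cost: float) -> dict[str, float]:
    """outcomes: one label per item, "straight_through", "human_review", or "exception"."""
    n = len(outcomes)
    if n == 0:
        raise ValueError("no items to score")
    return {
        "straight_through_rate": outcomes.count("straight_through") / n,
        "exception_rate": outcomes.count("exception") / n,
        "cost_per_task": total_cost / n,  # assumes every item is eventually completed
    }


@dataclass
class AgentRun:
    goal_completed: bool
    human_intervened: bool
    steps: int
    tool_calls: int
    tool_failures: int


def agent_metrics(runs: list[AgentRun]) -> dict[str, float]:
    if not runs:
        raise ValueError("no runs to score")
    n = len(runs)
    successes = [r for r in runs if r.goal_completed]
    total_calls = sum(r.tool_calls for r in runs)
    return {
        "goal_completion_rate": len(successes) / n,
        "human_intervention_rate": sum(r.human_intervened for r in runs) / n,
        "avg_steps_per_success": (sum(r.steps for r in successes) / len(successes)
                                  if successes else float("nan")),
        "tool_execution_success_rate": (
            (total_calls - sum(r.tool_failures for r in runs)) / total_calls
            if total_calls else float("nan")),
    }
```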

10. Recommended rollout pattern: automate first, agent second

This is my strongest opinion in the whole piece: most enterprises should earn the right to deploy agents by first getting good at AI automation.

Why? Because the discipline transfers.

When you build automation properly, you create:

– better process maps

– cleaner baseline metrics

– sharper exception handling

– stronger prompt and evaluation habits

– clearer data contracts

– more realistic user adoption patterns

Then, when you introduce agents, you are doing it on top of a controlled operating foundation instead of a swamp.

A sane rollout sequence in 2026 looks like this:

1. Pick one high-volume workflow with measurable pain.

2. Add AI to extraction, routing, summarization, scoring, or recommendation.

3. Prove value with human oversight.

4. Identify where static flow logic breaks down.

5. Introduce agentic behavior only in the parts where dynamic planning materially improves outcomes.

6. Keep approvals, logging, and rollback paths from day one.

That sequencing keeps the business outcome in charge. Which is how it should be.
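Steps 4 to 6 amount to running an agentic step only where it is enabled, with the static rule as the fallback and every decision logged. A minimal sketch, assuming a hypothetical `agent_route` callable that returns a decision, or `None` when confidence is low or context is incomplete. The `agent_enabled` flag doubles as the rollback switch.

```python
from typing import Callable, Optional


def hybrid_step(ticket: dict,
                deterministic_route: Callable[[dict], str],
                agent_route: Callable[[dict], Optional[str]],
                agent_enabled: bool,
                log: list[str]) -> str:
    """Use the agentic step only where enabled; fall back to the static rule otherwise."""
    if agent_enabled:
        try:
            decision = agent_route(ticket)
            if decision is not None:
                log.append(f"agent:{decision}")
                return decision
            log.append("agent:declined")  # low confidence or incomplete context
        except Exception as exc:          # any agent failure falls back to the static path
            log.append(f"agent:error:{type(exc).__name__}")
    decision = deterministic_route(ticket)
    log.append(f"rule:{decision}")
    return decision


log: list[str] = []
result = hybrid_step({"id": 7}, lambda t: "tier1_queue", lambda t: None,
                     agent_enabled=True, log=log)
print(result, log)  # tier1_queue ['agent:declined', 'rule:tier1_queue']
```

Because the static rule always remains callable, turning the flag off restores the proven automation path without a redeploy.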

11. Buyer questions to ask vendors before you commit

If a vendor says they offer “AI agents,” ask these questions and do not let them wriggle out with marketing fluff:

- What percentage of the workflow is deterministic versus model-driven?

- How do you trace decisions across multi-step actions?

- What are the hard permission boundaries for tools and data?

- How do you evaluate failure recovery, not just success cases?

- What happens when confidence is low or context is incomplete?

- How is human approval inserted, and at which risk thresholds?

- What metrics do your best customers use to prove ROI?

- What implementation dependencies usually slow deployment?

- How do you handle prompt/version drift over time?

- Can this use case be delivered as structured automation first?

If they hate the last question, that is a tell.

FAQ

What is the main difference between AI automation and AI agents?

AI automation uses AI inside a predefined workflow, while AI agents have more autonomy to decide steps, choose tools, and pursue a goal dynamically.

Are AI agents better than workflow automation?

Not by default. Agents are better only when the use case truly needs dynamic planning, multi-step reasoning, and changing tool use. For many enterprise workflows, automation is cheaper, safer, and faster to scale.

Which has faster ROI in enterprise settings?

AI automation usually reaches measurable ROI faster because implementation is narrower, easier to test, and easier to govern.

When should an enterprise choose AI agents?

Choose agents when the task is goal-based, spans multiple systems, changes frequently, and loses value if you try to hardcode every step.

Are AI agents riskier than automation?

Yes. More autonomy usually means higher governance, observability, security, and review requirements.

Can companies use both?

They should. The smart pattern is to automate controlled parts of a workflow first, then layer in agentic behavior where dynamic decision-making actually improves outcomes.

Conclusion

The wrong way to buy enterprise AI in 2026 is to ask, “Do we need agents?”

The right question is, “What level of autonomy is justified by the economics, risk, and workflow complexity of this problem?”

That question leads to better architecture, better budgeting, faster implementation, and less bullshit.

For most teams, AI automation should be the default. It is easier to trust, easier to govern, and more likely to show ROI in the first 90 days. AI agents matter, but they should be deployed where dynamic planning is genuinely required—not where the label sounds more strategic in a boardroom.

If you make that call with discipline, you stop buying AI as hype and start deploying it as an operating advantage.

AINinza is powered by Aeologic Technologies, which helps enterprises design practical AI automation, agentic workflows, and implementation roadmaps tied to business outcomes. If you want a grounded plan instead of another inflated AI pitch, start here: https://aeologic.com/

References

- Gartner, “Gartner Predicts 30% of Generative AI Projects Will Be Abandoned After Proof of Concept by End of 2025” — https://www.gartner.com/en/newsroom/press-releases/2024-07-29-gartner-predicts-30-percent-of-generative-ai-projects-will-be-abandoned-after-proof-of-concept-by-end-of-2025

- McKinsey, “The State of AI: Global Survey 2025” — https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

- Bain & Company, “Generative AI Virtually Ubiquitous in Global Business” — https://www.bain.com/about/media-center/press-releases/2024/generative-ai-virtually-ubiquitous-in-global-business-as-the-technology-spreads-at-a-near-unprecedented-rate–bain–company-proprietary-survey/

- NIST, “AI Risk Management Framework” — https://www.nist.gov/itl/ai-risk-management-framework

- AWS Prescriptive Guidance, “Retrieval Augmented Generation options and architectures” — https://docs.aws.amazon.com/prescriptive-guidance/latest/retrieval-augmented-generation-options/introduction.html

- Deloitte, “State of Generative AI in the Enterprise” — https://www.deloitte.com/az/en/issues/generative-ai/state-of-generative-ai-in-enterprise.html

- Stanford HAI, “2025 AI Index Report” — https://hai.stanford.edu/ai-index/2025-ai-index-report

- IBM, “What are AI agents?” — https://www.ibm.com/think/topics/ai-agents

- Microsoft, “2025 Work Trend Index” — https://www.microsoft.com/en-us/worklab/work-trend-index