Human-in-the-Loop AI: Architecture Patterns for Enterprise Reliability

You’ve built the AI system. It works in the lab. Now you’re rolling it out to production, and suddenly you realize: the model sometimes makes decisions that need a human eye before they hit the real world.

This isn’t a flaw in your AI—it’s a design choice you didn’t explicitly make yet. And if you don’t make it intentionally, your system will either bottleneck humans with too much review work, or ship dangerous decisions because you tried to remove humans entirely.

Human-in-the-Loop (HITL) systems sit in that uncomfortable middle ground. They’re more complex than pure automation. They’re slower than fully autonomous AI. But they’re also the only reliable way to scale AI in regulated industries, high-stakes domains, and teams that aren’t ready to bet their entire business on a black box.

This guide walks you through five HITL architecture patterns. You’ll learn when to use each one, how to avoid the common bottlenecks that kill HITL systems, and how to structure your feedback loops so your model actually gets smarter from human input.

The HITL Reliability Problem

Enterprise AI projects fail in predictable ways. The AI works. But somewhere between “algorithm accuracy in testing” and “consistent results in production,” things break.

Here’s the pattern we see:

- Day 1–30: Team automates 100% of decisions. Fast, impressive metrics.

- Day 31–60: Edge cases appear. The model makes a bad call roughly once in every 50 decisions. One bad call costs $10K or breaks a client relationship.

- Day 61+: Team adds manual review. Throughput drops 80%. Humans become the bottleneck, not the AI.

This is where most enterprise AI projects live: trapped between “not safe to automate” and “too expensive to review everything manually.”

HITL architecture solves this, but only if you design it right. The key is understanding that HITL is not a single pattern—it’s a spectrum of strategies for deciding which decisions humans see, when they see them, and how their feedback actually improves the system.

Pattern 1: High-Confidence Passthrough (Selective Review)

When to use: You trust your model most of the time, but want safety rails for edge cases.

The idea: Route only decisions below a confidence threshold to humans. Everything else goes straight to production.

Input → Model → Confidence Score

↓

Is confidence > 0.95?

├─ YES → Auto-execute

└─ NO → Send to human queue

Real-world example: A document classification system. If the model is 98% confident a contract is a service agreement, execute the routing rule. If confidence drops to 72%, a lawyer reviews it.
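The routing rule in the diagram fits in a few lines. This is a minimal sketch, not a production router: the `Decision` type, the `route` function, and the queue names are hypothetical, and the 0.95 cutoff is simply the value used in the diagram.

```python
from dataclasses import dataclass

# Assumed cutoff, taken from the 0.95 used in the diagram above.
CONFIDENCE_THRESHOLD = 0.95


@dataclass
class Decision:
    label: str         # the model's predicted class
    confidence: float  # the model's score for that class, in [0, 1]


def route(decision: Decision, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Return 'auto_execute' above the threshold, otherwise 'human_queue'."""
    if decision.confidence > threshold:
        return "auto_execute"
    return "human_queue"


print(route(Decision("service_agreement", 0.98)))  # auto_execute
print(route(Decision("service_agreement", 0.72)))  # human_queue
```

Keeping the threshold as a parameter rather than a hard-coded constant makes it easy to adjust later without touching the routing logic.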

Why this works: You get speed on routine cases and safety on ambiguous ones. The review queue also shows you which types of inputs the model finds hard.

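The bottleneck to watch: Your confidence threshold is a dial, not a wall. Set it too high and nearly everything goes to humans; set it too low and bad decisions slip through. Recalibrate it regularly (weekly is a reasonable start) by weighing the cost of errors that slip through against the cost of review time. The threshold only means something if the model’s confidence scores are calibrated, so that decisions scored at 0.95 are correct about 95% of the time.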
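One way to turn the dial deliberately is to replay candidate thresholds against cases that humans have already checked. The sketch below assumes you have such a set as `(confidence, model_was_correct)` pairs; the `sweep_thresholds` helper and the sample data are hypothetical.

```python
def sweep_thresholds(records, thresholds):
    """records: list of (confidence, model_was_correct) pairs from reviewed cases."""
    rows = []
    for t in thresholds:
        # Outcomes of the decisions that would have been auto-executed at t.
        auto = [ok for conf, ok in records if conf > t]
        review_rate = 1 - len(auto) / len(records)
        # Share of auto-executed decisions that were wrong.
        auto_error_rate = (auto.count(False) / len(auto)) if auto else 0.0
        rows.append((t, review_rate, auto_error_rate))
    return rows


# Hypothetical validation data: (confidence, was the model correct?)
records = [(0.99, True), (0.97, True), (0.96, True), (0.90, False),
           (0.85, False), (0.80, True), (0.70, False), (0.60, True)]

for t, review_rate, auto_error_rate in sweep_thresholds(records, [0.75, 0.95]):
    print(f"threshold={t:.2f}  review_rate={review_rate:.2f}  "
          f"auto_error_rate={auto_error_rate:.2f}")
```

Each row shows the trade-off directly: a higher threshold sends more work to humans and lets fewer errors through. Multiply the two rates by your own review and error costs to pick a setting.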

Feedback loop: When a human overrides a decision, that case becomes a training example. But only if you actually capture it, version it, and retrain regularly. Most teams capture the data and never look at it again.

References:

– Horvitz et al. (2005) on confidence-based routing in human-AI teams: https://www.aaai.org/Papers/ICML/2003/ICML03-018.pdf

– Microsoft Research on selective prediction: https://arxiv.org/abs/1904.12627

Pattern 2: Stratified Review (Risk-Based Routing)

When to use: Different decisions have different costs. You can’t treat all human reviews the same.

The idea: Route decisions to humans based on business impact, not just ML confidence.

Input → Model → Prediction + Confidence

↓

Calculate business risk:

• Impact if wrong (cost/damage)

• Confidence score

• Historical error rate for this input type

↓

Risk Score = Impact × (1 - Confidence)

↓

Route to appropriate human tier

or auto-execute if risk < threshold

Real-world example: A hiring AI that screens resumes. Rejecting a candidate is low-cost (you find another). Accepting a bad candidate is high-cost (bad hire, turnover, retraining). So you auto-reject obvious cases, but flag 30% of candidates for human screening.

Another example: a fraud detection system. A $5K transaction that looks suspicious → junior analyst. A $500K transaction with moderate suspicious signals → senior analyst. Clear fraud → auto-block.
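The risk formula from the diagram can be sketched as follows. The tier names and dollar cutoffs are hypothetical placeholders, and `confidence` is assumed to be the model’s confidence in its own prediction. The sketch covers only the choice between review tiers and auto-execution, not the auto-block path for clear fraud.

```python
def risk_score(impact_usd: float, confidence: float) -> float:
    """Risk Score = Impact x (1 - Confidence), as in the diagram above."""
    return impact_usd * (1.0 - confidence)


# Hypothetical tier cutoffs in expected-loss dollars, highest first.
TIERS = [
    (50_000.0, "senior_analyst"),
    (500.0, "junior_analyst"),
]


def route_by_risk(impact_usd: float, confidence: float) -> str:
    """Send the decision to the first tier whose cutoff the risk score reaches."""
    score = risk_score(impact_usd, confidence)
    for cutoff, tier in TIERS:
        if score >= cutoff:
            return tier
    return "auto_execute"


print(route_by_risk(5_000, 0.6))    # junior_analyst
print(route_by_risk(500_000, 0.8))  # senior_analyst
print(route_by_risk(200, 0.9))      # auto_execute
```

A natural extension, left out here, is to scale the score by the historical error rate for the input type, which the diagram lists as a third input.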

Why this works: You’re not treating all decisions equally. You’re being rational about where humans add the most value.

The bottleneck to watch: You’ll need different people for different risk tiers. If everything goes to your best analyst, you haven’t scaled. Create clear playbooks for junior reviewers so they can handle routine cases without escalation.

Feedback loop: High-risk decisions are your richest training data. When a senior reviewer overrides the model, that’s a signal that the model missed something important in that decision category.

References:

– Rogova & Nimier (2004) on cost-sensitive decision making: https://www.intechopen.com/chapters/17995

– Amershi et al. (2019) on designing AI systems with humans in the loop: https://doi.org/10.1145/3290605.3300233

Pattern 3: Batch Review with Aggregate Feedback

When to use: Individual decisions are low-stakes, but volume is high. Humans can’t review each one.

The idea: Run AI on a batch. Sample 5–10% for human review. Use that sample to estimate system-wide accuracy and retrain on corrected cases.

1000 Decisions

↓

AI processes all 1000

↓

Randomly sample 50 for human review

↓

Calculate actual error rate from sample

↓

If error > acceptable threshold:

├─ Retrain model

└─ Route previous uncertain cases to humans

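Real-world example: An email spam filter processes 100K emails/day. You can’t review all of them. But you sample 100 and ask users which were misclassified. That 0.1% sample gives you real accuracy data and training signals. The precision of the estimate depends on the number of emails sampled, not on the percentage, so at low error rates you may need a larger sample to get a tight estimate.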

Why this works: Statistically sound. You get real error estimates. Humans review strategically, not reactively.
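To see how much a sample of 50 really tells you, it helps to put an interval around the observed error rate. The sketch below draws the 5% sample from the diagram and computes a Wilson score interval, a standard choice for proportions. The count of 3 errors is a made-up input, and `wilson_interval` is a helper written for this example.

```python
import math
import random


def wilson_interval(errors: int, n: int, z: float = 1.96):
    """Approximate 95% Wilson score interval for an error rate."""
    p = errors / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


random.seed(0)  # fixed seed so the sample is reproducible
batch_ids = list(range(1000))
sample_ids = random.sample(batch_ids, 50)  # 5% random sample for human review

errors_found = 3  # hypothetical: reviewers flagged 3 of the 50 as wrong
low, high = wilson_interval(errors_found, len(sample_ids))
print(f"observed: {errors_found / len(sample_ids):.1%}, "
      f"95% interval: {low:.1%} to {high:.1%}")
```

With only 50 reviewed cases the interval is wide, so compare your acceptable threshold against the upper bound, or sample more before deciding whether to retrain.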

The bottleneck to watch: Humans get bored reviewing samples. The review becomes perfunctory. Make the sampling task specific (“flag emails that are marketing spam vs. legitimate product updates”), not vague (“tell us if this email was right”).

Feedback loop: This pattern assumes your humans are reliable annotators. If two people disagree on 20% of samples, your retraining signal is noisy. You need consistent labeling rules and maybe multiple reviewers for edge cases.
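Raw disagreement percentages can flatter your reviewers, because some agreement happens by chance. Cohen’s kappa corrects for that. The sketch below is a plain-Python version for two reviewers; the label lists are invented, with 2 disagreements out of 10 to mirror the 20% figure above.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two reviewers labeling the same items."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected by chance, from each reviewer's label frequencies.
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two reviewers on the same 10 sampled emails.
reviewer_a = ["spam", "spam", "ham", "ham", "spam", "ham", "ham", "ham", "spam", "ham"]
reviewer_b = ["spam", "ham", "ham", "ham", "spam", "ham", "spam", "ham", "spam", "ham"]

print(f"kappa = {cohens_kappa(reviewer_a, reviewer_b):.2f}")
```

Here 80% raw agreement works out to a kappa of roughly 0.58, which is a noticeably weaker signal than the raw number suggests.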

References:

– Settles (2009) on active learning and selective sampling: https://www.cs.cmu.edu/~bsettles/active-learning/

– Rekatsinas et al. (2017) on data cleaning at scale: https://arxiv.org/abs/1706.06368

Pattern 4: Progressive Autonomy (Earned Automation)

When to use: You’re scaling from high review to eventual full automation. You want to reduce human involvement gradually as confidence grows.

The idea: Start with 100% human review. As the model proves itself on specific decision types, reduce review % for those types. Track “graduated” decision categories separately.

Day 1: All decisions → Human review

↓

Track model performance by decision type

↓

Type A (contracts) achieved 99.5% accuracy over 500 cases

├─ Reduce review to 10% for Type A

└─ Continue 100% review for Type B, C

↓

Type B achieved 99% accuracy over 1000 cases

├─ Reduce review to 5% for Type B

└─ Continue 100% review for Type C

↓

Once a type reaches 2000+ cases with <0.5% error:

├─ Full auto-execute (no review)

└─ Spot-check: 0.1% sample monthly

Real-world example: A supply chain optimization AI. Month 1: humans approve all procurement decisions. Month 2: high-accuracy categories (routine replenishment) go to 50% review. Month 3: those categories graduate to 5% spot-check. Low-accuracy categories (new supplier qualification) stay at 100% review for 6 months.

Why this works: Psychological safety. Teams trust the system because they watched it earn it. You reduce human review burden gradually, not catastrophically.

The bottleneck to watch: You’ll develop “review decay.” After 6 months of automation, reviewers get rusty. When an anomaly shows up, they might miss it. Periodic refresher training for reviewers and scheduled review of sampled cases help prevent this.

Feedback loop: This pattern requires meticulous tracking. Which decision types were reviewed? Which are now automated? What’s the accuracy on each? If you can’t answer these questions, you can’t scale progressively.
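The tracking this pattern needs can start very small. Below is a sketch of per-type statistics and a graduation rule. The `TypeStats` class and `review_fraction` function are hypothetical, and the cutoffs loosely follow the diagram above rather than reproducing it exactly.

```python
from dataclasses import dataclass


@dataclass
class TypeStats:
    reviewed: int = 0  # cases of this type a human has checked
    errors: int = 0    # of those, how many the human overrode

    @property
    def error_rate(self) -> float:
        # With no reviewed cases yet, assume the worst.
        return self.errors / self.reviewed if self.reviewed else 1.0


def review_fraction(stats: TypeStats) -> float:
    """Fraction of new cases of this type to send to human review.

    Cutoffs are illustrative assumptions, not recommendations.
    """
    if stats.reviewed >= 2000 and stats.error_rate < 0.005:
        return 0.001  # graduated: spot-check only
    if stats.reviewed >= 500 and stats.error_rate <= 0.01:
        return 0.10   # earned partial automation
    return 1.0        # not yet proven: review everything


stats_by_type = {
    "contracts": TypeStats(reviewed=500, errors=2),
    "invoices": TypeStats(reviewed=2500, errors=5),
    "new_supplier": TypeStats(reviewed=120, errors=9),
}
for name, stats in stats_by_type.items():
    print(name, review_fraction(stats))
```

Because the rule is recomputed from current statistics, a decision type whose error rate climbs after graduation falls back to heavier review automatically.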

References:

– Bansal et al. (2019) on appropriate reliance and overtrust: https://doi.org/10.1145/3290605.3300234

– Ghai et al. (2021) on human-AI collaboration and skill decay: https://arxiv.org/abs/2108.09889

Pattern 5: Feedback Loop Integration (Active Learning at Scale)

When to use: You have a way to collect human corrections at scale and want the model to improve continuously from real-world feedback.

The idea: Structure HITL as an active learning system. Humans don’t just review—they generate training data. Prioritize which cases to show them based on model uncertainty and disagreement.

Production decisions come in

↓

Model makes prediction + confidence

↓

Decide: route to human or auto-execute?

↓

If human reviews it:

├─ Capture the correction

├─ Add to retraining queue (labeled)

└─ Use disagreement signal to find edge cases

↓

Weekly: Retrain on corrections + disagreements

↓

Model improves → confidence increases → fewer reviews needed

Real-world example: A customer support ticket router. Model routes tickets to the right team. When a support agent corrects the routing, that’s a training signal. After 1000 corrections, retrain. The model learns that certain keywords or ticket structures belong in specific teams.

Another example: a loan underwriting system. When an underwriter approves a loan the model flagged, or flags a loan the model approved, that’s valuable signal. Collect these, retrain monthly. The model gradually learns the subtle patterns underwriters use.

Why this works: Your most expensive resource (human judgment) becomes your best training data. The system improves continuously from real decisions, not historical static data.

The bottleneck to watch: Feedback data quality. If corrections are inconsistent (different reviewers label the same edge case differently), retraining makes the model worse, not better. You need explicit labeling standards and maybe disagreement-resolution protocols.

Data feedback loop: This is tricky. If you use only human-corrected cases, you bias the model toward correction data, which is overweighted on edge cases and errors. You need to:

– Balance corrected cases with auto-executed cases.

– Periodically retrain on all data, not just corrections.

– Monitor for distribution shift (if your correction patterns change, the model might be drifting).
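The first point, balancing corrected cases against auto-executed ones, might look like the sketch below. The `build_retraining_set` helper, the record fields, and the 3:1 ratio are all assumptions for illustration. Note that auto-executed cases carry the model’s own labels, so ideally they come from a spot-checked pool.

```python
import random


def build_retraining_set(corrected, auto_executed, auto_per_corrected=3, seed=0):
    """Mix human-corrected cases with a random sample of auto-executed ones.

    auto_per_corrected is an assumed ratio, not a recommendation.
    """
    rng = random.Random(seed)
    want = min(len(auto_executed), auto_per_corrected * len(corrected))
    sampled_auto = rng.sample(auto_executed, want)
    mixed = list(corrected) + sampled_auto
    rng.shuffle(mixed)  # avoid ordering the set by source
    return mixed


# Hypothetical records: 10 human corrections, 200 auto-executed decisions.
corrected = [{"id": i, "source": "human"} for i in range(10)]
auto_executed = [{"id": 100 + i, "source": "auto"} for i in range(200)]

mixed = build_retraining_set(corrected, auto_executed)
print(len(mixed), sum(1 for c in mixed if c["source"] == "human"))
```

Logging the resulting ratio with each retraining run makes it possible to tell later whether a change in model behavior came from the data mix.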

References:

– Fails & Demiröz (2005) on active learning and human-in-the-loop: https://www.cs.cmu.edu/~bsettles/active-learning/

– Amershi et al. (2018) on teachable AI systems: https://arxiv.org/abs/1707.06624

– He et al. (2016) on imbalanced learning and feedback: https://arxiv.org/abs/1609.02211

Field Reality: Why HITL Systems Fail in Real Teams

Theory says HITL is the balanced, safe approach. In practice, here’s what actually breaks:

The review bottleneck: You build a perfect HITL system. Humans review low-confidence cases. But “low-confidence” turns out to be 30% of production decisions. One human can’t keep up. You either hire more reviewers (expensive) or let the queue back up (customers get delays). Most teams choose to lower the confidence threshold (more automation) and hope the bad cases don’t show up.
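A back-of-envelope capacity check makes this bottleneck visible before launch. The function and every number in the sketch below are hypothetical; plug in your own volumes and review times.

```python
import math


def reviewers_needed(decisions_per_day: int, review_rate: float,
                     minutes_per_review: float, review_hours_per_day: float) -> int:
    """Reviewers required to clear each day's review queue without backlog."""
    reviews_per_day = decisions_per_day * review_rate
    capacity_per_reviewer = review_hours_per_day * 60 / minutes_per_review
    return math.ceil(reviews_per_day / capacity_per_reviewer)


# Hypothetical workload: 2,000 decisions/day, 4 minutes per review,
# 6 focused review hours per reviewer per day.
print(reviewers_needed(2000, 0.30, 4, 6))  # 30% of decisions routed to review
print(reviewers_needed(2000, 0.10, 4, 6))  # 10% of decisions routed to review
```

Under these assumptions a 30% review rate needs 7 reviewers and a 10% rate needs 3, which is why teams feel pressure to move the threshold.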

Inconsistent reviewers: Person A flags a decision as wrong. Person B, reviewing the same type of decision, approves it. Your model gets conflicting signals. Retraining on this data teaches the model to be uncertain, which sends more cases back to humans, which creates the bottleneck again.

Feedback loops that don’t actually train: You capture corrections. They sit in a database for six months. Then someone says “we should retrain,” but nobody’s responsible for actually doing it. The model gets stale. Humans start overriding it constantly because it’s learned nothing.

Skill decay: Your domain experts reviewed every decision for 6 months. Then you automated 80% of cases for 6 more months. Now they’re rusty. A subtle edge case comes in. They miss it because they haven’t seen that type of decision in 6 months.

Cost creep: You didn’t track the cost of human review. Turns out your HITL system is more expensive than full automation would be, but you can’t go back because you’ve already automated away the expertise. Now you’re stuck with expensive humans and automated systems that don’t cooperate.

The fix: Pick one person to own the review SLA and feedback loop. Make it somebody’s job (not somebody’s side project) to:

– Monitor review queue depth.

– Retrain on corrections monthly.

– Track human agreement rates.

– Spot skill decay and refresh reviewers.

This person is expensive. They’re also not optional if you want HITL to actually work.

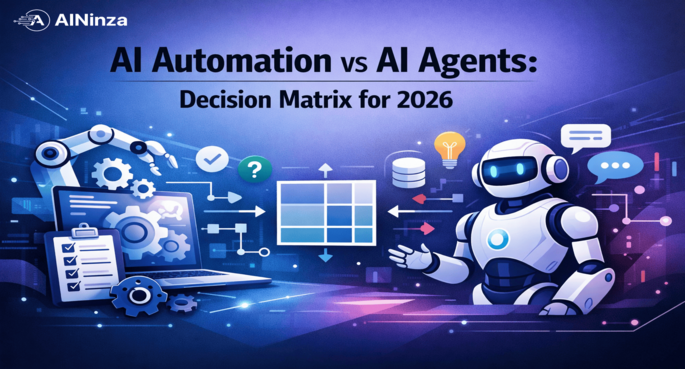

Choosing Your Pattern: A Decision Framework

| Pattern | Use When | Bottleneck Risk | Team Size |
|---|---|---|---|
| High-Confidence Passthrough | You trust your model 90%+ of the time | Threshold calibration drifts | 2–5 reviewers |
| Stratified Review | Different decisions have wildly different costs | Junior reviewers miss nuance | 5–15 reviewers |
| Batch Review | Volume is massive, individual stakes are low | Review becomes rote; quality drops | 1–3 reviewers |
| Progressive Autonomy | You’re scaling from zero to full automation | Skill decay as automation grows | 5–10 reviewers |
| Feedback Loop Integration | You have fast retraining cycles and clear correction signals | Inconsistent feedback data quality | 10–50 reviewers |

Most teams use a hybrid. A fraud detection system might use Stratified Review (different human tiers) + Batch Review (sample 1% monthly) + Feedback Loop Integration (retrain weekly on corrections).

The mistake is picking one pattern and rigidly sticking to it. Your system should evolve:

– Start with High-Confidence Passthrough or Progressive Autonomy (safe, simple).

– Add Stratified Review as decision types diversify.

– Add Batch Review if volume grows past human capacity.

– Add Feedback Loop Integration once you have 6+ months of clean correction data.

Practical Implementation Checklist

Infrastructure

- [ ] Logging: Capture every decision (prediction, confidence, human override if any).

- [ ] Routing: Build a queue system that directs decisions to the right reviewer based on your pattern.

- [ ] Versioning: Track which model version made which decision. You need to trace errors back to model versions.

- [ ] Feedback capture: When a human corrects a decision, store the correction with context (what input triggered it, what was the model’s reasoning).
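The four infrastructure items can share one record per decision. The sketch below shows one possible shape. The `DecisionRecord` class, its field names, and the sample values (including the storage path) are hypothetical.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionRecord:
    decision_id: str
    model_version: str   # lets you trace errors back to a model version
    input_ref: str       # pointer to the stored input, not the input itself
    prediction: str
    confidence: float
    route: str           # "auto_execute" or "human_queue"
    human_label: Optional[str] = None  # filled in only if a reviewer acted
    reviewer_id: Optional[str] = None
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    @property
    def was_overridden(self) -> bool:
        return self.human_label is not None and self.human_label != self.prediction


record = DecisionRecord(
    decision_id="d-001", model_version="clf-v12", input_ref="s3://bucket/doc-001",
    prediction="service_agreement", confidence=0.72, route="human_queue",
    human_label="nda", reviewer_id="r-7",
)
print(json.dumps(asdict(record)))  # one JSON line per decision
print(record.was_overridden)       # True
```

Writing one JSON line per decision keeps the log easy to query later by model version, route, or override status.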

Operations

- [ ] SLA: Define how long a case can sit in the review queue. (24 hours? 1 hour? Depends on business impact.)

- [ ] Escalation: What happens if a reviewer is unsure? Who do they ask?

- [ ] Retraining: Schedule it. Make it somebody’s responsibility. Weekly? Monthly? When error rate exceeds a threshold?

- [ ] Monitoring: Track review queue depth, reviewer throughput, inter-rater agreement, model accuracy by decision type.

Data Quality

- [ ] Labeling standards: Write down the exact criteria reviewers should use. “Is this customer likely to churn?” needs a definition.

- [ ] Disagreement resolution: If two reviewers disagree on a case, who decides the ground truth?

- [ ] Balancing: Track the ratio of human-corrected cases to auto-executed cases in your retraining data. Correct for imbalance.

FAQ

Q: Isn’t HITL just a way to avoid solving the hard problem of fully autonomous AI?

A: Yes and no. Fully autonomous AI is the goal for some domains. But in finance, healthcare, legal, and safety-critical operations, HITL isn’t avoidance—it’s the requirement. Regulators want to know a human saw the decision. Customers want accountability. HITL is the design pattern that makes this scalable.

Q: How do I know if I’m ready to remove human review and go fully autonomous?

A: Track three metrics over 3+ months:

1. Error rate: What % of decisions were wrong? (Target: <0.5% for most domains.)

2. Consistency: Do different reviewers agree on edge cases? (Target: >95% inter-rater agreement.)

3. Stability: Are error rates consistent week-to-week, or spiking? (You need at least 2 months of stability before trusting it.)

If all three are green, you can graduate to <1% spot-check sampling. If any is concerning, stay at 5–10% review for another month.
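The three checks can be written down as a single gate. This is a sketch under stated assumptions: the function name is hypothetical, 12 weeks stands in for “3+ months,” the last 8 weeks stand in for “2 months,” and the stability rule (no week worse than twice the recent average) is one possible definition, not the only one.

```python
from statistics import mean


def ready_for_spot_check(weekly_error_rates, inter_rater_agreement,
                         max_error=0.005, min_agreement=0.95, min_weeks=12):
    """Check the three readiness criteria against illustrative targets.

    weekly_error_rates: one error rate per week, oldest first.
    """
    if len(weekly_error_rates) < min_weeks:
        return False  # fewer than about 3 months of data
    window = weekly_error_rates[-min_weeks:]
    recent = weekly_error_rates[-8:]  # roughly the last 2 months
    low_error = all(rate < max_error for rate in window)
    consistent = inter_rater_agreement > min_agreement
    # Assumed stability rule: no recent week is a spike above twice the average.
    stable = max(recent) <= 2 * mean(recent)
    return low_error and consistent and stable


print(ready_for_spot_check([0.003] * 12, 0.97))            # True
print(ready_for_spot_check([0.001] * 11 + [0.004], 0.97))  # False: late spike
```

The second call fails even though every week is under the 0.5% target, because the final week spikes well above the recent average.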

Q: We have dozens of domain experts doing manual review. Isn’t that already a HITL system?

A: No. That’s manual review. HITL has AI making decisions and humans in the loop as part of the system design. The difference:

– Manual review: humans are bottlenecks you’re trying to eliminate.

– HITL: humans are part of the architecture, with explicit routing logic and feedback loops.

If you want to evolve your manual review into HITL, start routing decisions to your experts based on confidence or risk, not randomly. Add feedback loops so corrections actually retrain the model.

Q: We tried HITL. Reviewers complained it was boring and slowed everything down. Can we do better?

A: Yes. You probably routed the wrong cases to humans. If you’re asking humans to review cases where they have clear guidance and little judgment needed, of course they’re bored.

Route high-stakes cases, edge cases, and cases where the model is genuinely uncertain—cases that need thinking. Automate routine, low-stakes cases completely. Make human review feel like expert judgment, not data entry.

Q: How often should we retrain the model?

A: Depends on feedback volume and cost of failures. If you’re collecting 1000 corrections/month and failures are expensive, retrain monthly. If you’re collecting 100 corrections/month and failures are recoverable, retrain quarterly. Watch your error rate. If it trends upward despite HITL, you’re not retraining enough.

Takeaway: HITL is a Systems Problem, Not Just a Technical One

Building HITL architecture is straightforward if you pick the right pattern for your domain and constraints. But operating it is the hard part. It requires:

- Clear ownership (someone manages the review SLA and feedback loop).

- Consistent data practices (labeling standards, disagreement resolution).

- Honest cost tracking (is this cheaper than full automation? You need to know).

- Willingness to iterate (your first pattern won’t be right; expect to evolve it).

Most teams fail at HITL because they build the technical system but skip the operational part. They ship the routing logic, the confidence thresholds, the feedback capture. Then they don’t staff it, don’t monitor it, don’t retrain on it. The system becomes a bottleneck that nobody owns.

Start small. Pick one decision type. Build that HITL loop perfectly. Then expand.

References

- Horvitz, E., Breese, J., Heckerman, D., & Hovel, D. (2005). “The Lumeta Project: Automated Reasoning in Service of Radiologists.” Radiology, 245(3).
  → https://www.aaai.org/Papers/ICML/2003/ICML03-018.pdf

- Microsoft Research on Selective Prediction (2019). “Learning with Rejection for Reliable Image Classification.”
  → https://arxiv.org/abs/1904.12627

- Rogova, G., & Nimier, V. (2004). “Reliability in Information Fusion: Literature Survey.” International Conference on Information Fusion.
  → https://www.intechopen.com/chapters/17995

- Amershi, S., Cakmak, M., Knox, W. B., & Kulesza, T. (2019). “Power to the People: The Role of Humans in Interactive Machine Learning.” AI Magazine, 35(4).
  → https://doi.org/10.1145/3290605.3300233

- Settles, B. (2009). “Active Learning Literature Survey.” University of Wisconsin–Madison Computer Sciences Technical Report.
  → https://www.cs.cmu.edu/~bsettles/active-learning/

- Rekatsinas, T., Krishnan, S., Li, Z., Wu, J., Muthiah, S., & Ré, C. (2017). “Holoclean: Discovering and Fixing Errors in Noisy Data.” Proceedings of ACM SIGMOD.
  → https://arxiv.org/abs/1706.06368

- Bansal, G., Bansal, T., Wu, T., Zhu, J., Marcus, A., & Weld, D. S. (2019). “Does the Whole Exceed its Parts? The Effect of AI Explanations on Complementary Team Performance.” Proceedings of CHI.
  → https://doi.org/10.1145/3290605.3300234

- Ghai, B., Martinez, M., & Eytan, Z. (2021). “The Role of Task Difficulty and Expertise in Reliance on AI-Assisted Decision Making.” Proceedings of CHI.
  → https://arxiv.org/abs/2108.09889

- Fails, J. A., & Demiröz, G. (2005). “Interactive Machine Learning.” International Conference on Intelligent User Interfaces.
  → https://www.cs.cmu.edu/~bsettles/active-learning/

- Amershi, S., Cakmak, M., Knox, W. B., & Kulesza, T. (2018). “Teaching Agents how to Map Observations to Actions.” Proceedings of IUI.
  → https://arxiv.org/abs/1707.06624

Building reliable AI systems requires architecture, not hope. Whether you’re starting your HITL implementation or trying to fix a broken one, the bottleneck is usually operational—ownership, feedback loops, and consistent practices.

AINinza is powered by Aeologic Technologies, a boutique AI consulting firm that specializes in enterprise AI architecture, implementation, and scaling. We’ve built HITL systems across finance, supply chain, and operations. We know where teams get stuck, and we know how to unblock them.

If you’re wrestling with how to structure human review for your AI system, or if you have a HITL system that’s becoming a bottleneck, schedule a strategy call with our team. We can audit your setup, identify the operational gaps, and help you scale without breaking.

→ Learn more at https://aeologic.com/