Building Your First AI Product MVP in 30 Days: The AINinza Framework

Most AI initiatives fail for operational reasons, not technical ones. In 2026, organizations across industries are racing to deploy AI, but many stumble before shipping their first feature. The difference between companies that ship AI products fast and those that stall often comes down to execution discipline, not access to better models or engineers.

At AINinza, we’ve built an operational framework that consistently delivers measurable AI outcomes in 30 days or less. This guide walks you through the exact process.

Why Speed Matters in AI Product Development

According to McKinsey’s 2024 AI survey, only 14% of companies deploying AI report measurable business impact. The reason isn’t model performance; it’s time to value. Teams that take 6+ months to launch pilots often lose stakeholder momentum, budget alignment, and organizational credibility.

The 30-day constraint forces discipline: it eliminates bike-shedding over model selection, reduces analysis paralysis, and creates urgency around shipping real outcomes. We’ve seen teams cut their AI-to-value cycle from 6 months to 4 weeks while improving reliability.

The data is clear: Harvard Business Review’s analysis of 100+ AI projects found that teams shipping their first AI feature within 60 days had a 73% higher success rate with subsequent rollouts.

The AINinza 5-Step Framework

Step 1: Outcome Selection (Days 1-2)

Start with a single, measurable business outcome. Not “implement AI.” Not “improve our data.” Choose one KPI:

- Conversion: increase sales funnel conversion by X%

- Speed: reduce response time by X%

- Cost: reduce manual effort cost by X%

- Quality: reduce defects/errors by X%

- Throughput: increase processing volume by X%

Pick one. Measure its baseline, commit to a 30-day target, and let that target guide every decision downstream.
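Here is a minimal Python sketch that makes “measure the baseline, commit to a target” concrete for a single KPI. The `KpiTarget` class and its field names are hypothetical and are not part of any AINinza tooling. The class computes percent improvement over the baseline. A flag handles KPIs where lower is better, such as response time, cost, or error rate.

```python
from dataclasses import dataclass


@dataclass
class KpiTarget:
    """One KPI with a measured baseline and a 30-day improvement target (hypothetical)."""
    name: str
    baseline: float
    target_improvement_pct: float
    lower_is_better: bool = False  # True for response time, cost, error rate

    def __post_init__(self) -> None:
        if self.baseline == 0:
            raise ValueError("baseline must be non-zero to express improvement as a percent")

    def improvement_pct(self, current: float) -> float:
        """Percent improvement of `current` relative to the baseline."""
        delta = current - self.baseline
        if self.lower_is_better:
            delta = -delta  # a drop counts as an improvement
        return 100.0 * delta / self.baseline

    def target_met(self, current: float) -> bool:
        return self.improvement_pct(current) >= self.target_improvement_pct


# Example: cut average response time from 8.0 hours by 25%.
response_time = KpiTarget("avg_response_hours", baseline=8.0,
                          target_improvement_pct=25.0, lower_is_better=True)
print(response_time.improvement_pct(6.0))  # 25.0
print(response_time.target_met(6.0))       # True
```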

Step 2: Workflow Mapping (Days 2-4)

Map the current process end-to-end. Identify:

- Handoffs: Where does work pass between teams/systems?

- Bottlenecks: Where do tasks queue or slow down?

- Repetitive decisions: Where are humans doing the same judgment call repeatedly?

- Data dependencies: What information is available at each step?

AI creates the most leverage at high-friction, repeatable decision points. If 40% of your time is spent on a single judgment call, and that decision follows a clear pattern, that’s your pilot.

Bain & Company research shows that companies targeting high-friction workflows achieve 3-5x faster ROI than those spreading AI across multiple processes.
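One way to shortlist pilot candidates from the workflow map is to score each decision point on two things: how much time it consumes and how pattern-like it is. The sketch below uses a hypothetical heuristic of time share × repeatability, with both values estimated during the mapping exercise. This is not a standard formula, and the example numbers are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class DecisionPoint:
    name: str
    time_share: float     # fraction of team time spent on this call (0-1), estimated
    repeatability: float  # how consistently the call follows a pattern (0-1), estimated

    @property
    def leverage(self) -> float:
        # Hypothetical heuristic: friction weighted by how pattern-like the decision is.
        return self.time_share * self.repeatability


def pick_pilot(points: list[DecisionPoint]) -> DecisionPoint:
    """Return the decision point with the highest leverage score."""
    return max(points, key=lambda p: p.leverage)


workflow = [
    DecisionPoint("ticket triage", time_share=0.40, repeatability=0.9),
    DecisionPoint("refund approval", time_share=0.15, repeatability=0.7),
    DecisionPoint("escalation review", time_share=0.25, repeatability=0.3),
]
print(pick_pilot(workflow).name)  # ticket triage
```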

Step 3: Data Readiness (Days 4-7)

Don’t build the perfect dataset. Build one that works. In week 1, you need:

- 100-500 labeled examples of your target decision

- Clean access to production data (with proper permissions)

- Defined metadata: what does each field mean?

- Baseline metrics captured

A Power of Data survey found that poor data prep adds 6-8 weeks to projects. Our approach skips perfection and focuses on shipping with monitoring guardrails.
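The week-1 checklist can be turned into a simple automated gate. This minimal sketch assumes two things: each example is a dict with a `label` key, and field definitions live in a plain mapping. `readiness_gaps` is a hypothetical helper. Production-data access and permissions still need a human sign-off, so the sketch does not try to check them.

```python
def readiness_gaps(examples: list[dict], field_docs: dict[str, str],
                   baseline_metrics: dict[str, float], min_examples: int = 100) -> list[str]:
    """Return unmet week-1 readiness items; an empty list means ready to build.

    examples: labeled decisions, each a dict (assumed schema with a "label" key).
    field_docs: field name -> plain-language definition.
    baseline_metrics: KPI name -> measured baseline value.
    """
    gaps = []
    labeled = [e for e in examples if e.get("label") is not None]
    if len(labeled) < min_examples:
        gaps.append(f"only {len(labeled)} labeled examples (need {min_examples}+)")
    # Every field that appears in the data should have a documented meaning.
    fields = {key for e in examples for key in e}
    undocumented = sorted(fields - set(field_docs))
    if undocumented:
        gaps.append("undocumented fields: " + ", ".join(undocumented))
    if not baseline_metrics:
        gaps.append("no baseline metrics captured")
    return gaps


examples = [{"input": f"ticket {i}", "label": "billing"} for i in range(120)]
docs = {"input": "raw ticket text", "label": "queue an agent routed the ticket to"}
print(readiness_gaps(examples, docs, {"avg_response_hours": 8.0}))  # []
```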

Step 4: Build & Test the Workflow (Days 7-21)

This is where implementation happens. Modern AI MVP development is rarely about model training anymore; it’s about orchestration:

- Prompt engineering: Use off-the-shelf LLMs (GPT-4, Claude, Llama). Iterate on prompts, not models.

- Integration: Connect the model to your data sources and decision points.

- Human-in-the-loop: Define confidence thresholds. Auto-approve high-confidence decisions. Escalate edge cases.

- Observability: Log every decision: input, output, human override, and outcome.
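The human-in-the-loop and observability items fit together naturally, because every decision can be routed and logged in one place. The sketch below assumes the model, or a separate scorer, returns a confidence between 0 and 1. Many LLM APIs do not provide a calibrated confidence directly. The 0.90 threshold, `DecisionRecord`, and the JSON-lines log format are all hypothetical choices.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Optional

# Hypothetical starting threshold; Week 3 tuning should replace it.
AUTO_APPROVE_THRESHOLD = 0.90


@dataclass
class DecisionRecord:
    """One observable decision: input, output, routing, and what happened next."""
    input_text: str
    model_output: str
    confidence: float
    route: str                            # "auto" or "human"
    human_override: Optional[str] = None  # set if a reviewer changes the output
    outcome: Optional[str] = None         # set once the business result is known
    timestamp: float = 0.0


def route_decision(input_text: str, model_output: str, confidence: float,
                   high_stakes: bool = False) -> DecisionRecord:
    """Auto-approve confident, low-stakes decisions; escalate everything else."""
    auto = confidence >= AUTO_APPROVE_THRESHOLD and not high_stakes
    return DecisionRecord(
        input_text=input_text,
        model_output=model_output,
        confidence=confidence,
        route="auto" if auto else "human",
        timestamp=time.time(),
    )


def to_log_line(record: DecisionRecord) -> str:
    """Serialize a record as one JSON line for an append-only decision log."""
    return json.dumps(asdict(record))


record = route_decision("Where is my refund?", "billing", confidence=0.95)
print(record.route)  # auto
print(to_log_line(record))
```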

Anthropic’s research on prompt optimization shows that well-engineered prompts on modern models outperform fine-tuned smaller models in 70% of business use cases, with faster time-to-value.

Week 2 focus: Get the MVP shipped and instrumented. Not perfect, but live.

Week 3 focus: Run edge-case testing, catch exceptions, refine thresholds.
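For the Week 3 threshold refinement, one simple approach uses the human-reviewed sample. Pick the lowest confidence threshold whose auto-approved decisions still meet a target accuracy (precision). This sketch assumes a 95% precision target and a minimum of 20 auto-approved cases. `tune_threshold` is a hypothetical helper. Confirm the chosen value on a fresh review sample before relying on it.

```python
def tune_threshold(reviewed, target_precision=0.95, min_auto=20):
    """Pick the lowest confidence threshold whose auto-approved set meets the target.

    reviewed: list of (confidence, was_correct) pairs from human review.
    Returns None if no threshold reaches the target with at least `min_auto` cases.
    """
    candidates = sorted({conf for conf, _ in reviewed})
    for threshold in candidates:  # ascending: a lower threshold automates more
        auto = [ok for conf, ok in reviewed if conf >= threshold]
        if len(auto) >= min_auto and sum(auto) / len(auto) >= target_precision:
            return threshold
    return None


# Illustrative review sample: (model confidence, reviewer judged it correct)
reviewed = ([(0.95, True)] * 30
            + [(0.80, True)] * 6 + [(0.80, False)] * 4
            + [(0.60, True)] * 3 + [(0.60, False)] * 7)
print(tune_threshold(reviewed))  # 0.95
```

Lowering the precision target trades accuracy for automation. With `target_precision=0.85`, the same sample yields a threshold of 0.8.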

Step 5: Limited Launch & Measure (Days 21-30)

Ship to a subset: 10% of traffic, a single team, a pilot group. Monitor:

- Decision accuracy (human review sample)

- Impact on your KPI

- Exception rate

- User feedback
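Shipping to 10% of traffic works best when cohort assignment is deterministic. The same user then always gets the same experience, and the pilot group stays stable across the measurement window. A common technique is to hash a stable ID into buckets. The sketch below uses SHA-256 from Python’s standard `hashlib`; the salt string and function name are hypothetical.

```python
import hashlib


def in_pilot(user_id: str, pilot_pct: float = 10.0, salt: str = "ai-mvp-pilot") -> bool:
    """Deterministically place roughly `pilot_pct` percent of users in the pilot."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # stable bucket in 0..9999
    return bucket < pilot_pct * 100        # 10% -> buckets 0..999


share = sum(in_pilot(f"user-{i}") for i in range(20_000)) / 20_000
print(round(share, 2))  # close to 0.1
```

Changing the salt reshuffles the cohorts, which is useful when relaunching a pilot with a fresh group.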

After 7 days of live data, make a scale decision: full rollout, iterate and relaunch, or pivot.
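The scale decision can be written down as an explicit rule before launch, so the final conversation is about data rather than opinion. The thresholds here are hypothetical defaults for illustration: 90% reviewed accuracy and at most 15% exceptions. The iterate-versus-pivot logic is also an assumption. User feedback is qualitative, so it is left out of the rule.

```python
from dataclasses import dataclass


@dataclass
class PilotWeek:
    reviewed: int               # decisions sampled for human review
    reviewed_correct: int       # of those, judged correct
    total_decisions: int
    exceptions: int             # escalations, errors, or overrides
    kpi_improvement_pct: float  # vs. the baseline


def scale_decision(week: PilotWeek, target_pct: float,
                   min_accuracy: float = 0.90, max_exception_rate: float = 0.15) -> str:
    """Map 7 days of pilot data to 'full rollout', 'iterate and relaunch', or 'pivot'."""
    accuracy = week.reviewed_correct / week.reviewed
    exception_rate = week.exceptions / week.total_decisions
    if (accuracy >= min_accuracy and exception_rate <= max_exception_rate
            and week.kpi_improvement_pct >= target_pct):
        return "full rollout"
    if week.kpi_improvement_pct > 0:  # moving the KPI, but not yet good enough
        return "iterate and relaunch"
    return "pivot"


print(scale_decision(PilotWeek(200, 186, 5000, 400, 28.0), target_pct=25.0))  # full rollout
```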

The 30-Day Timeline in Detail

| Phase | Days | Outputs |
|---|---|---|
| Define | 1-4 | Target KPI, baseline metrics, workflow map, go/no-go decision |
| Prepare | 5-7 | Dataset + labels, data pipeline, monitoring framework |
| Build | 8-14 | Prompt + orchestration, human-in-loop layer, integration tests |
| Refine | 15-21 | Edge case handling, confidence tuning, deployment checklist |
| Launch | 22-30 | Pilot rollout, outcome measurement, scale or iterate decision |

Common Execution Mistakes to Avoid

Mistake 1: Starting with Model Selection

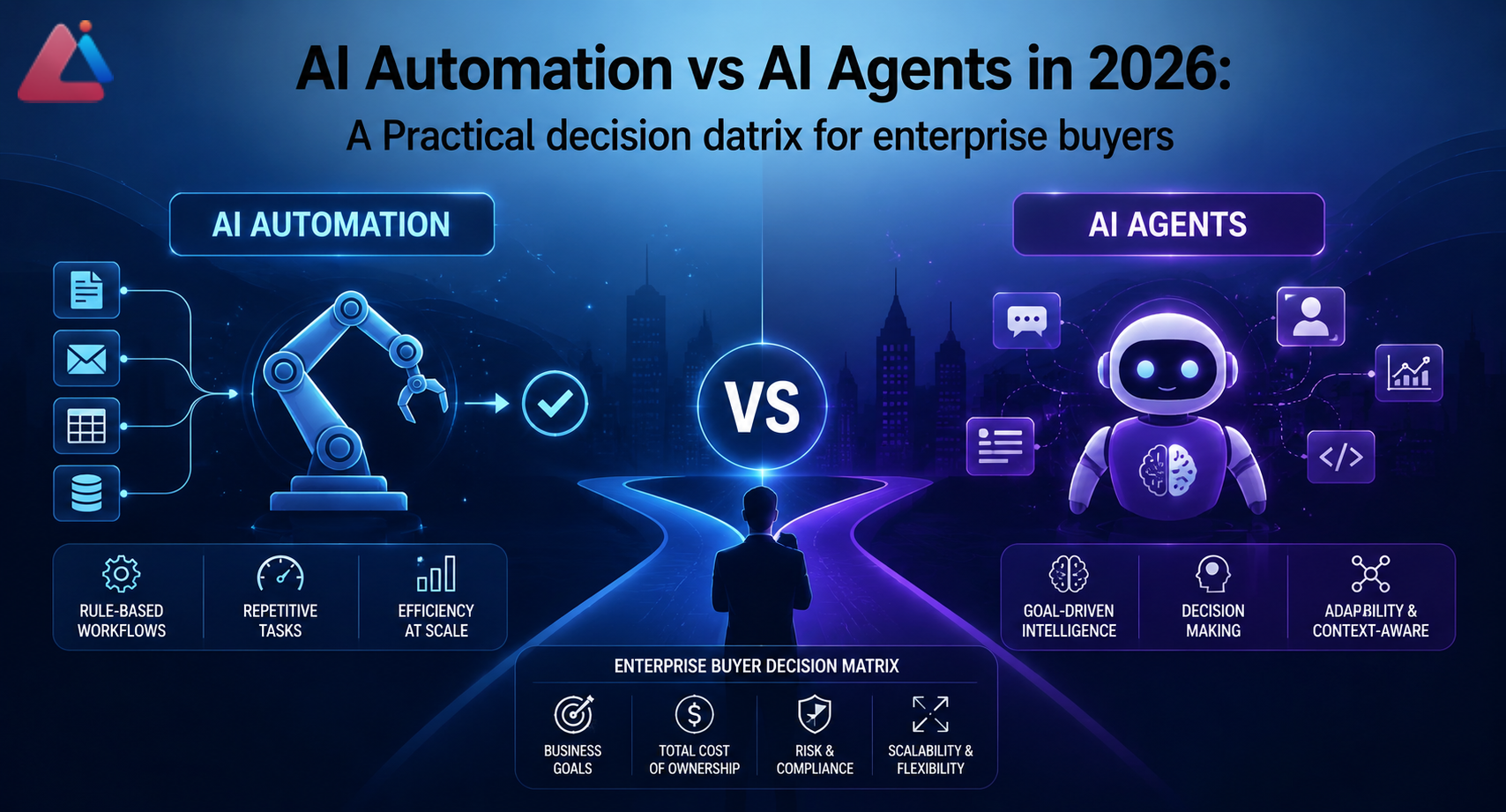

Teams often ask “Should we use GPT-4, Claude, or Llama?” before defining the problem. In the first few days, this choice matters less than you think. For 80% of enterprise use cases, a modern API-based model (GPT-4, Claude 3.5) driven by well-engineered prompts beats a fine-tuned smaller model. Save infrastructure decisions for scale.

Mistake 2: Trying Multi-Agent Orchestration Too Early

Multi-agent systems are powerful but introduce state management, latency, and debugging complexity. Get single-workflow reliability first. Then scale to orchestration.

Mistake 3: No Baseline Metrics

If you don’t measure the current state, ROI becomes opinion. Capture baseline performance before touching anything. Then measure the same way post-launch.

Mistake 4: Ignoring Governance Until After Launch

Define data privacy, audit requirements, and override authority upfront. It’s harder to retrofit governance into production.

How Aeologic Approaches 30-Day Delivery

Our teams focus on measurable business lift first. We combine:

- Workflow architecture: Where does AI add the most leverage?

- AI engineering: Prompt design, integration, observability.

- Adoption support: Change management, team training, stakeholder communication.

This ensures deployments are not just technically sound, but operationally useful and actually shipped.

Forrester’s 2025 AI ROI report found that teams with dedicated implementation partners delivered 2.3x faster and had 40% higher adoption rates.

Scaling Beyond Day 30

A successful 30-day MVP is proof of concept and momentum builder. The next phase is scaling to adjacent workflows:

- Days 31-60: Scale the pilot to full production traffic.

- Days 61-90: Identify adjacent workflows with similar patterns.

- Days 91+: Build a repeatable AI integration process for your org.

Teams that nail the 30-day cadence typically deploy 2-3 additional workflows per quarter afterward.

FAQ: 30-Day AI Product MVP Delivery

Q: Can we really do this in 30 days?

A: Yes, for a focused workflow. The constraint forces prioritization. You’re not building a full platform in 30 days; you’re shipping measurable impact on one high-friction process. That’s repeatable.

Q: What if we don’t have clean data?

A: You don’t need clean data. You need labeled examples and documented edge cases. Use a small, curated dataset. The model doesn’t know whether your data is “complete”; it works from the patterns in the examples you give it.

Q: What if the model is wrong?

A: Build human-in-the-loop guardrails. If confidence is low or the decision is high-stakes, escalate to a human. This is safer, faster, and lets you learn which edge cases need handling.

Q: How much does this cost?

A: A typical 30-day pilot costs up to roughly 80k in API costs and implementation. Compare that to 6 months of consultant time or a failed custom ML project. The math usually favors moving fast with commercial models.

Q: What if we fail?

A: Failure in 30 days is learning, not waste. You’ve tested a hypothesis cheaply and can pivot to the next workflow. The worse outcome is shipping slowly and missing the market.

Final Take

AI wins are compounding. Start with one critical workflow, ship fast with guardrails, measure relentlessly, and scale what proves impact. If your team is evaluating where to begin, this is exactly the kind of implementation path we’re built for.

AINinza is powered by Aeologic Technologies. If you want to implement AI automation, AI agents, or enterprise AI workflows with measurable ROI, book a strategy call with Aeologic.

Scaling Beyond Day 30: Building on Early Success

A successful 30-day MVP is a proof of concept and a momentum builder, but the real value compounds when you scale to adjacent workflows. Each phase of scaling has its own discipline:

- Days 31-60: Don’t jump straight to full production traffic. Start at 10 percent coverage, expand gradually to 100 percent, and measure at each step.

- Days 61-90: Your 30-day workflow likely isn’t unique. Look for 2-3 other high-friction processes with the same characteristics.

- Days 91+: Build a repeatable AI integration process for your organization. This is when you shift from projects to platforms.

That is how AI impact compounds.
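The expansion from 10 to 100 percent coverage can be expressed as a gated ramp. Move up one step only when the metrics at the current step hold, and step back when they don’t. The step sizes below are a hypothetical schedule, and `next_coverage` is an illustrative helper rather than a prescribed method.

```python
# Hypothetical ramp schedule, in percent of production traffic.
RAMP_STEPS = [10, 25, 50, 100]


def next_coverage(current_pct: int, step_healthy: bool) -> int:
    """Advance one ramp step if the current step's metrics held; otherwise step back."""
    i = RAMP_STEPS.index(current_pct)
    if step_healthy:
        return RAMP_STEPS[min(i + 1, len(RAMP_STEPS) - 1)]  # stay at 100 once there
    return RAMP_STEPS[max(i - 1, 0)]                        # never drop below the first step


coverage = 10
for healthy in [True, True, False, True]:  # one health check per measurement window
    coverage = next_coverage(coverage, healthy)
print(coverage)  # 50
```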

Real Implementation Story

One enterprise customer started with customer support triage. The 30-day pilot reduced manual triage time by 35 percent. They then identified three adjacent workflows: claims processing, invoice validation, and customer onboarding. Over the next 90 days, they rolled out AI to all four workflows. By month 6, aggregate savings reached 400 hours per month across the organization, or an annualized 4,800 hours of manual work eliminated. At 75 dollars per hour fully loaded, that’s 360k in annual cost savings.

But the real victory was cultural. Employees started asking, ‘Where can we apply AI next?’ The organization went from ‘AI is something the data team experiments with’ to ‘AI is how we solve bottlenecks.’ That kind of cultural shift is hard to reverse, and it compounds.
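The annual figures follow from the monthly run rate. Here is a quick check. It assumes the 400 hours per month reached by month 6 holds for a full 12 months, so the result is an annualized rate, not cumulative savings in the first calendar year.

```python
hours_saved_per_month = 400  # aggregate run rate reached by month 6
loaded_cost_per_hour = 75    # fully loaded cost, in dollars

hours_saved_per_year = hours_saved_per_month * 12
annual_savings = hours_saved_per_year * loaded_cost_per_hour
print(hours_saved_per_year)  # 4800
print(annual_savings)        # 360000
```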

The Most Common Reason Projects Fail After Day 30

Teams nail the 30-day MVP and then lose momentum while scaling. Why? Stakeholders change. The executive who championed the pilot gets reassigned. New governance requirements appear. Model performance degrades slightly in production, and people lose confidence.

The best antidote is to make scaling part of the original plan. Don’t treat the 30-day MVP as the finish line. Treat it as proof that you can ship AI fast and measure impact. The real work is scaling what works, iterating on what doesn’t, and building organizational confidence in AI as a tool for business impact.

Additional Resources and Benchmarking

McKinsey’s latest AI adoption survey provides detailed benchmarking on what companies in your industry are achieving. Anthropic’s research on prompt engineering shows that investment in prompt quality pays 10x the dividend of investment in model fine-tuning. Harvard Business Review’s guide to generative AI covers organizational change management, which is often the hidden blocker. Bain’s research on AI-driven companies shows the leadership behaviors that predict AI success. If you’re building an AI-first organization, these are must-reads.

Final Reflection: Why 30 Days Matters

30 days isn’t arbitrary. It’s the sweet spot between moving fast enough to maintain momentum and moving slow enough to learn. It forces prioritization (you can’t do everything). It requires discipline (you must measure, not hope). It creates a repeatable process (the next pilot will be faster). And it proves that you can deliver AI value, which shifts the organizational conversation from ‘Is AI possible for us?’ to ‘What’s next?’ That psychological shift is as valuable as the technical achievement.

Post-Deployment: The First 30 Days After Launch

Day 1 of full launch feels anticlimactic. The model is live, and performance matches the pilot. No surprises yet.

- Days 2-7: Real-world edge cases start to appear. Users find scenarios the QA team didn’t think to test, and the model makes unexpected errors. This is normal. Log every error and document the patterns.

- Days 8-14: The first wave of optimizations. Address the most common errors, refine thresholds, and improve confidence scoring. This is where iterative improvement accelerates.

- Days 15-21: The team hits equilibrium. Performance stabilizes, users get comfortable with the tool, and adoption increases naturally.

- Days 22-30: Begin measuring impact against the baseline by comparing the current KPI to its value 30 days ago. Most teams see a 20-35 percent improvement by now. That is lower than the initial 30-50 percent target, because real-world conditions are harder than pilots.

Use this 30-day post-launch number as your starting point for scaling conversations. It’s credible, it’s real, and it’s proven on actual data.
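Logging every error and documenting the patterns is easiest when each logged error carries a category tag from the reviewer. Assuming that setup, a standard-library `Counter` is enough to surface the most common patterns for the first optimization wave. The `category` key and the example categories are hypothetical.

```python
from collections import Counter


def top_error_patterns(error_log, n=3):
    """Count logged errors by reviewer-assigned category and return the n most common."""
    counts = Counter(e["category"] for e in error_log)
    return counts.most_common(n)


errors = ([{"category": "wrong queue"}] * 12
          + [{"category": "missed urgency"}] * 7
          + [{"category": "language not supported"}] * 3
          + [{"category": "other"}] * 1)
print(top_error_patterns(errors))
# [('wrong queue', 12), ('missed urgency', 7), ('language not supported', 3)]
```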
