How to Build a Production-Grade RAG System Without Hallucinations

Production RAG Architecture That Survives Real Traffic

If your RAG stack is returning confident wrong answers in 2026, the issue is usually not the LLM. It is retrieval quality, context packing, weak citation rules, and missing safety routes when confidence is low.

A production setup needs five layers working together:

- Ingestion and normalization: parse PDFs, docs, tickets, wiki pages, and keep version metadata with last-modified tracking.

- Indexing: semantic chunking + dense embeddings + vector and keyword indexes.

- Retrieval and ranking: hybrid search, cross-encoder reranking, context assembly, and token budgeting.

- Generation with evidence: strict citation binding, grounded prompting, and claim validation.

- Evaluation and control plane: offline metrics (RAGAS), online telemetry, confidence scoring, and human escalation.

Without all five, you get demos that look good and production systems that fail under real noisy queries, hallucinate facts, and erode user trust.

Reference Architecture (Opinionated, Q1 2026 Stack)

Data flow

- Connectors pull source data from Confluence, SharePoint, Google Drive, Jira, Notion, S3, databases, and API endpoints with change detection.

- Documents are normalized to clean Markdown or JSON with source ID, section headers, ACL, timestamp, version, and content hash for dedup.

- Chunking creates semantically coherent units by heading boundaries and natural breaks (not fixed-size slices).

- Dense embeddings are generated with the chosen embedding model and stored with chunk metadata in a vector store; sparse term vectors can be stored alongside them for hybrid search.

- A BM25 index (OpenSearch/Elasticsearch) stores full text for keyword recall and domain-specific acronyms.

- At query time, hybrid retrieval fetches candidates from vector (top-100) + keyword (top-100) indexes in parallel.

- A cross-encoder reranker scores combined candidates and keeps top 8-12 chunks for context.

- A context builder removes duplicates, enforces token budget (~6k tokens for evidence in 12k total), and preserves citation IDs and source lineage.

- LLM generates answer constrained to cited evidence with structured output (claims + citations).

- Grounding checker validates each claim against provided chunks before response is returned to user.

Recommended starting stack (2026)

- Ingestion: Unstructured, LlamaIndex readers, or custom document processors with validation.

- Embeddings: OpenAI text-embedding-3-large for accuracy on mixed domains, or bge-large-en-v1.5 for self-hosted control and cost.

- Vector DB: Pinecone (serverless for <5M vectors), Qdrant (Cloud, with a self-hosted option), or pgvector (if Postgres-native).

- Keyword retrieval: OpenSearch or Elasticsearch BM25 with domain-specific analyzers.

- Reranker: Cohere Rerank v3 or open-source bge-reranker-large.

- Generation: Claude 3.5 Sonnet, GPT-4 Turbo, or Llama 3.1 (70B+) with JSON schema output for structured citations.

- Guardrails: Custom semantic grounding checks + refusal policy when evidence confidence score < 0.65.

Chunking: Where Most Accuracy Loss Starts

Chunking quality drives retrieval quality. Bad chunking creates false negatives: relevant content exists but is never returned, either because it was split across chunk boundaries or because the chunk lost the surrounding context needed to match the query.

What works in practice

- Semantic section chunking: split by headings, lists, and paragraph boundaries first; preserve table structure entirely.

- Token window target: 300-500 tokens for policy and documentation (longer for narrative content).

- Overlap: 10-20% overlap only when narrative continuity matters; no overlap for tabular or procedural content.

- Special handling: tables and code blocks stay intact; never split mid-table row, function signature, or API spec.

- Metadata per chunk: section title, source URL, page number, version, updated_at, and content checksum.

- Query-time heuristics: boost chunks with exact acronym or formula matches over chunks that match only semantically.
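The heading-first approach above can be sketched in a few lines. This is a minimal illustration, not a production chunker: `chunk_by_headings` and `approx_tokens` are hypothetical names, the token count is approximated by whitespace-separated words (swap in your real tokenizer), and it assumes the input is already normalized Markdown with blank lines between blocks.

```python
import re


def approx_tokens(text: str) -> int:
    # Assumption: word count as a rough stand-in for a real tokenizer.
    return len(text.split())


def chunk_by_headings(markdown: str, max_tokens: int = 400) -> list[dict]:
    """Split on headings first, then pack whole blocks up to max_tokens."""
    chunks: list[dict] = []
    title = ""
    buffer: list[str] = []

    def flush() -> None:
        # Emit the buffered blocks as one chunk tagged with its section title.
        if buffer:
            chunks.append({"section": title, "text": "\n\n".join(buffer)})
            buffer.clear()

    # Blocks are separated by blank lines; a block is never split, so a table
    # or code block written without internal blank lines stays intact.
    for block in re.split(r"\n\s*\n", markdown.strip()):
        block = block.strip()
        if not block:
            continue
        if block.startswith("#"):
            flush()  # a heading always closes the previous chunk
            heading, _, rest = block.partition("\n")
            title = heading.lstrip("#").strip()
            block = rest.strip()
            if not block:
                continue
        used = sum(approx_tokens(b) for b in buffer)
        if buffer and used + approx_tokens(block) > max_tokens:
            flush()
        buffer.append(block)
    flush()
    return chunks
```

A single block larger than `max_tokens` becomes its own oversized chunk rather than being cut mid-table, which matches the "stay intact" rule above. Overlap is deliberately left out of this sketch.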

Numbers from production rollouts (2025-2026)

On enterprise knowledge bases (100k to 2M chunks), teams typically see:

- Naive fixed 1,000-token chunking: 58-67% answer faithfulness on internal eval sets (Salesforce, Microsoft, enterprise teams).

- Semantic 400-token chunking + reranker: 76-88% faithfulness — most teams land here after 4-6 weeks tuning.

- Adding table-aware chunking for ops and finance docs: extra 5-11 point gain on factoid questions (observed by JPMorgan, Stripe teams).

- Acronym + formula indexing for tech docs: +6-18 points on recall-at-top-3 queries.

The lift comes from retrieval hit quality, not from changing the generator model or prompt tricks.

Embedding Models and Retrieval Quality Trade-offs

Pick embeddings based on corpus language, latency target, self-hosting tolerance, and cost budget. Benchmark leaders change often, so validate on your own corpus.

| Model | Typical Dim | Strength | Latency (per 1k chunks) | Cost Profile | When to Choose |
|---|---|---|---|---|---|
| text-embedding-3-large | 3072 | High recall on mixed enterprise corpora; strong on domain terminology | ~1.5-3.0s API-side batch | API usage cost (~$0.13/1M tokens at list price), low ops overhead | Fastest route to high relevance; baseline pick |
| text-embedding-3-small | 1536 | Lower cost, still competitive quality vs large | ~1.0-2.2s API-side batch | Lower API bill (~$0.02/1M tokens) | Cost-sensitive or latency-critical; worth an A/B test |
| bge-large-en-v1.5 | 1024 | Strong open-source retrieval; fine-tunable | ~0.8-2.5s on A10G batch | GPU hosting + ops labor; amortizes over time | Data residency needs or vendor lock-in concerns |
| e5-large-v2 | 1024 | Stable baseline; generalizes well across domains | ~0.9-2.8s on A10G batch | GPU hosting + ops time | Self-hosted baseline; lower upfront cost |
| Voyage AI voyage-2 | 1024 | Tuned for retrieval; strong on long documents | ~2.0-4.5s API batch | API cost (~$0.10/1M tokens) | Long-document RAG workflows; specialized use |

For most teams, start with hosted embeddings for 4-8 weeks, measure retrieval quality on your corpus with a test set, then decide if self-hosting is worth the operational load. Switching models later means re-embedding the whole corpus, and that cost is non-trivial at scale.

Vector Store Selection: Latency, Cost, and Operational Reality

There is no universal winner. The right choice depends on record count, QPS, multi-tenant isolation, backup/HA requirements, and your team’s tolerance for infra work.

| Vector Store | p95 Query Latency (100k-1M vectors) | Monthly Cost Range* | Operational Complexity | Best For |
|---|---|---|---|---|
| Pinecone (serverless/pod) | 40-120 ms | $70-$2,000+ | Low | Fast setup, managed scaling, predictable DX, SOC2 compliance |
| Weaviate Cloud | 50-150 ms | $90-$1,800+ | Medium | Good filtering + hybrid search; EU data residency |
| Qdrant Cloud / self-hosted | 35-130 ms | $40-$1,200+ (cloud) / infra-based (self-host) | Medium | Strong performance-cost ratio; open-source control |
| pgvector (Postgres) | 80-300 ms | $25-$600+ (depends on DB tier) | Medium-High | Best when Postgres is primary DB and queries can tolerate longer p95 |
| Milvus (self-hosted) | 30-120 ms | Infra-dependent | Medium-High | On-premise needs; strong parallel query support |

*Ranges vary by region, replication, SLA, and query volume. Costs at 100k vectors are substantially lower, and they scale nonlinearly at 10M+. Use load tests with your own embedding dimensions and filter patterns before committing.

Practical recommendation

- < 5M chunks, small team: managed Pinecone serverless or Qdrant Cloud to minimize ops.

- 5M+ chunks, stable traffic: self-hosted Qdrant + Kubernetes, or Milvus for on-premise.

- Strict data residency + platform team available: self-hosted Qdrant/Weaviate with backup strategy.

- Already all-in on Postgres, moderate QPS (<100 q/s): pgvector can work, but benchmark hard on YOUR filter patterns before committing; p95 latency is highly dependent on index quality.

Retrieval Ranking Pipeline: Hybrid + Rerank Is Not Optional

Single-step vector search is not enough for enterprise queries. Acronyms, exact policy IDs, error codes, and procedural names are often keyword-dominant. Semantic-only retrieval misses these entirely. Hybrid retrieval fixes 80-90% of the recall gaps that teams encounter in production.

Minimum retrieval pipeline

- Query rewrite: normalize spelling, expand known acronyms, detect intent (lookup vs. troubleshooting vs. how-to).

- Hybrid candidate fetch: vector top-100 (parallel) + BM25 top-100 (parallel).

- Dedup + filtering: merge results, remove exact duplicates, enforce ACL checks, freshness constraints, tenant boundary filters.

- Cross-encoder rerank: score deduplicated candidate pool, keep top-8 to top-12 for generation.

- Context packing: include highest-score chunks first; preserve source diversity; remove near-duplicates via embedding similarity.
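The "merge results" step needs some way to combine two ranked lists whose scores are not comparable (cosine similarity vs. BM25). One common option, not prescribed by the pipeline above, is reciprocal rank fusion (RRF), which uses only rank positions. The sketch below is an assumption-laden illustration: `reciprocal_rank_fusion` is a hypothetical helper, `k=60` is the conventional RRF constant, and chunk IDs are assumed to be shared between the vector and keyword indexes.

```python
from collections import defaultdict


def reciprocal_rank_fusion(
    ranked_lists: list[list[str]], k: int = 60, top_n: int = 150
) -> list[str]:
    """Fuse ranked lists of chunk IDs; each hit adds 1 / (k + rank)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in ranked_lists:
        seen: set[str] = set()
        for rank, chunk_id in enumerate(ranking, start=1):
            if chunk_id in seen:  # ignore repeats within one list
                continue
            seen.add(chunk_id)
            scores[chunk_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so output is deterministic.
    ordered = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [chunk_id for chunk_id, _ in ordered[:top_n]]


# Chunks found by both retrievers rise to the top of the fused pool.
vector_hits = ["a", "b", "c"]
bm25_hits = ["c", "a", "d"]
candidates = reciprocal_rank_fusion([vector_hits, bm25_hits])
```

Because each chunk ID appears once in the fused output, exact duplicates are removed as a side effect. ACL, freshness, and tenant filters still have to run before the pool is handed to the cross-encoder.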

Expected gains

- Hybrid retrieval over vector-only: +8 to +20 points on recall@10 in enterprise corpora (Stripe, Notion, Discord observed +12-15).

- Adding reranker: +6 to +15 points on answer faithfulness (RAGAS metric).

- Net effect on user-visible wrong answers: often 25-50% reduction after tuning thresholds.

- With grounding validator: additional 10-20% reduction in severe hallucinations (claims with zero evidence).

Why Hallucinations Happen in RAG (and How to Stop Each Failure Mode)

1) Retrieval failure

Symptom: model answers confidently from prior knowledge, not your corpus (common with outdated fine-tuned LLMs).

Causes: poor chunking, weak embeddings for domain language, bad ACL filters, missing hybrid retrieval, acronyms not expanded.

Fixes:

- Track retrieval recall@k and hit rate by query category in your evaluations.

- Use hybrid retrieval (vector + BM25) from day one.

- Build acronym and domain-term dictionaries from your real support ticket history.

- Run canary tests whenever ingestion pipeline changes (especially source schema changes).

- Monitor index freshness alerts — stale indexes are silent killers.
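Tracking recall@k by query category, as recommended above, takes very little code once you have a labeled set. A minimal sketch follows; the record layout (`category`, `retrieved`, `relevant`) and the function names are assumptions for illustration, not a standard format.

```python
from collections import defaultdict


def recall_at_k(retrieved: list[str], relevant: list[str], k: int) -> float:
    """Fraction of the relevant chunk IDs that appear in the top-k results."""
    wanted = set(relevant)
    if not wanted:
        return 0.0
    return len(set(retrieved[:k]) & wanted) / len(wanted)


def recall_by_category(examples: list[dict], k: int = 10) -> dict[str, float]:
    """Average recall@k per query category."""
    per_category: dict[str, list[float]] = defaultdict(list)
    for ex in examples:
        per_category[ex["category"]].append(
            recall_at_k(ex["retrieved"], ex["relevant"], k)
        )
    return {cat: sum(vals) / len(vals) for cat, vals in per_category.items()}
```

Breaking the number down by category is the point: a healthy global average can hide a category such as error-code lookups where retrieval is failing almost every time.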

2) Context window overflow

Symptom: relevant evidence exists but gets dropped during prompt assembly or truncated in generation.

Causes: too many chunks retrieved, poor token budgeting, verbose system prompts, unnecessary context packing.

Fixes:

- Hard token budget for evidence (e.g., 6k of 12k total prompt budget, measured in BPE tokens).

- Keep only top reranked chunks; remove near-duplicates (cosine sim > 0.85).

- Use contextual compression or extractive summarization for long chunks.

- Fail closed when no high-score chunk fits budget; escalate instead of guessing.

- Measure and log “evidence truncated” rate; if > 5%, reduce retrieval count or increase budget.
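The budget, near-duplicate, and fail-closed rules above fit into one small packing function. This is a sketch under stated assumptions: each chunk is a dict with hypothetical keys `id`, `score` (reranker score), `tokens` (precomputed token count), and `vector` (its embedding), and `pack_context` is an illustrative name rather than any library's API.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def pack_context(
    chunks: list[dict], budget_tokens: int = 6000, dup_threshold: float = 0.85
) -> dict:
    """Greedy packing: best reranker score first, within a hard token budget."""
    selected: list[dict] = []
    used = 0
    dropped_for_budget = 0
    for chunk in sorted(chunks, key=lambda c: c["score"], reverse=True):
        # Skip near-duplicates of chunks that are already packed.
        if any(cosine(chunk["vector"], kept["vector"]) > dup_threshold
               for kept in selected):
            continue
        # Skip chunks that would exceed the evidence budget.
        if used + chunk["tokens"] > budget_tokens:
            dropped_for_budget += 1
            continue
        selected.append(chunk)
        used += chunk["tokens"]
    return {
        "chunk_ids": [c["id"] for c in selected],
        "tokens_used": used,
        "evidence_truncated": dropped_for_budget > 0,  # log this rate
        "escalate": not selected,  # fail closed: nothing fit the budget
    }
```

Skipping an oversized chunk instead of stopping lets a smaller, lower-ranked chunk still use the remaining budget. Whether that is desirable depends on how much you trust the tail of the reranked list.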

3) Conflicting sources

Symptom: answer mixes old and new policy statements; user gets contradictory guidance.

Causes: stale documents remain searchable, no temporal weighting, version control broken in source system.

Fixes:

- Version all source documents and store effective dates + deprecation dates.

- Prefer the latest approved source through a rank boost (multiplicative factor of 1.2-1.5 for recent versions).

- If top-3 sources conflict on a claim, force model to present both and mark uncertainty.

- Add stale-index detection: if > 10% of docs are older than your SLA, alert and pause RAG.

- Implement deletion propagation: when a source is marked deprecated, remove/hide related chunks within hours.
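Two of the fixes above, hiding deprecated sources and boosting recent ones, can be applied as a small re-scoring step before context packing. The sketch below assumes each candidate carries hypothetical `effective_on` and optional `deprecated_on` dates; the 90-day definition of "recent" and the 1.3 factor are placeholders to tune, chosen from within the 1.2-1.5 range mentioned above.

```python
from datetime import date


def apply_version_boost(
    candidates: list[dict], today: date, recent_days: int = 90, boost: float = 1.3
) -> list[dict]:
    """Drop deprecated chunks, then multiply the score of recent ones."""
    rescored = []
    for cand in candidates:
        deprecated_on = cand.get("deprecated_on")
        if deprecated_on is not None and deprecated_on <= today:
            continue  # deprecated sources must not reach the prompt
        age_days = (today - cand["effective_on"]).days
        factor = boost if 0 <= age_days <= recent_days else 1.0
        rescored.append({**cand, "score": cand["score"] * factor})
    return sorted(rescored, key=lambda c: c["score"], reverse=True)
```

A multiplicative boost only reorders candidates that are already close in score. It will not rescue a new policy document that retrieval failed to surface in the first place, which is why the version metadata and deletion propagation still matter.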

4) Model confabulation

Symptom: model invents values, API endpoints, config names, or policy steps not in any context chunk.

Causes: permissive prompting (“feel free to explain if not found”), no citation constraints, no output validator, using older fine-tuned models.

Fixes:

- Require every factual sentence to map to explicit citation IDs (schema-enforced, not prompt-requested).

- Run a grounding pass: each claim must have lexical or semantic support (cosine > 0.72) from context.

- If unsupported claim ratio > 15%, return refusal or escalate to human instead of shipping answer.

- For high-risk domains (legal, medical, financial, billing), always route low-confidence answers (< 0.70) to humans.

- Add a “fact-check” second pass: given the final answer, re-embed each claim and search for supporting chunks; a claim with no supporting chunk triggers rejection.

Citation Enforcement and Grounding Checks

“Please cite sources” in prompt text is weak control. Enforce citations through output schema and post-generation validators—it’s what separates production RAG from demos.

Recommended response schema

- answer_text: final response (markdown-safe).

- claims[]: atomic factual statements (one per sentence when possible).

- citations[]: source IDs + specific chunk reference per claim.

- confidence: 0-1 score from retrieval margin, reranker, and grounding layers.

- needs_human_review: boolean gate (true if confidence < 0.65 or any unsupported claims).

- fallback_reason: if human-routed, why (e.g., “conflicting sources”, “no relevant chunks”, “high-risk intent”).
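One way to make this schema enforceable rather than advisory is to parse model output into typed objects and validate them before anything is shown to the user. The sketch below mirrors the fields above with Python dataclasses; the `Citation` shape (`claim_index`, `source_id`, `chunk_id`) and the `validate` helper are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Citation:
    claim_index: int  # position of the claim in RagResponse.claims
    source_id: str
    chunk_id: str


@dataclass
class RagResponse:
    answer_text: str
    claims: list[str]
    citations: list[Citation]
    confidence: float
    needs_human_review: bool = False
    fallback_reason: Optional[str] = None


def validate(response: RagResponse, known_chunk_ids: set[str]) -> list[str]:
    """Return a list of schema violations; an empty list means valid."""
    errors = []
    if not 0.0 <= response.confidence <= 1.0:
        errors.append("confidence out of range")
    cited = {c.claim_index for c in response.citations}
    for index in range(len(response.claims)):
        if index not in cited:
            errors.append(f"claim {index} has no citation")
    for citation in response.citations:
        # A citation must point at a chunk that was actually in the prompt.
        if citation.chunk_id not in known_chunk_ids:
            errors.append(f"citation to unknown chunk {citation.chunk_id}")
    return errors
```

Passing the set of chunk IDs that were actually placed in the prompt as `known_chunk_ids` is what catches invented citations: the model can only cite what it was given.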

Grounding algorithm (simple and effective)

- Split generated answer into atomic claims (one factual unit per claim).

- For each claim, compute max cosine similarity with all cited chunk texts.

- Run contradiction check against top alternate chunks not in citation list.

- Mark claim unsupported if similarity below threshold (e.g., 0.72 cosine) or contradiction score > 0.4.

- If unsupported claim ratio > 0.15 (15%), set needs_human_review = true and skip auto-response.

- Log unsupported claim details for weekly review and model debugging.
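The similarity part of the algorithm above can be sketched as follows. Two loud caveats: `embed` here is a toy bag-of-words stand-in so the example runs without a model (with a real embedding model the 0.72 threshold needs re-tuning on your data), and the contradiction check is omitted because it requires an NLI-style classifier that this sketch does not assume.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy embedding (assumption): lowercase word counts, not a real model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def check_grounding(
    claims: list[str],
    cited_chunks: list[str],
    sim_threshold: float = 0.72,
    max_unsupported_ratio: float = 0.15,
) -> dict:
    """Flag claims whose best similarity to any cited chunk is too low."""
    unsupported = []
    for claim in claims:
        best = max(
            (cosine(embed(claim), embed(chunk)) for chunk in cited_chunks),
            default=0.0,
        )
        if best < sim_threshold:
            unsupported.append(claim)
    # No claims at all is treated as fully unsupported (fail closed).
    ratio = len(unsupported) / len(claims) if claims else 1.0
    return {
        "unsupported": unsupported,  # log these for the weekly review
        "unsupported_ratio": ratio,
        "needs_human_review": ratio > max_unsupported_ratio,
    }
```

For simplicity the sketch compares each claim against every cited chunk. A stricter variant would compare each claim only against the chunks it cites, which also catches correct facts attached to the wrong source.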

Teams using this pattern (Anthropic, OpenAI partners, enterprise deployments) often cut severe hallucinations by 40-75% after threshold tuning. Most hallucination reduction happens at the citation layer, not by prompt engineering.

Confidence Scoring and Human Fallback Routing

Low confidence should not produce polished guesses. It should trigger escalation immediately. This is the reliability multiplier.

Composite confidence score formula

Use weighted signals (tune weights on your validation set):

- Retrieval signal: reranker score of top chunk + margin between rank 1 and rank 2 (weight ~0.25).

- Embedding signal: mean similarity of retrieved chunks to original query (weight ~0.15).

- Citation coverage: ratio of claims with valid citations (weight ~0.20).

- Grounding pass: ratio of claims that pass semantic validation (weight ~0.25).

- Query risk class: billing questions, compliance terms, security controls, contract details score lower base (weight ~0.15).

Example routing policy:

- Confidence ≥ 0.82: auto-answer (no escalation).

- 0.65 to < 0.82: answer with uncertainty banner + ask clarifying question.

- < 0.65 or high-risk class: route immediately to human queue with all retrieved evidence attached.
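The weighted score and the routing policy combine into a few lines. The weights and thresholds below are the starting values listed above; the signal names, the convention that every signal is pre-normalized to 0-1 (with the risk signal at 1.0 for low-risk queries), and the route labels are assumptions for this sketch.

```python
WEIGHTS = {
    "retrieval": 0.25,          # reranker top score + rank-1/rank-2 margin
    "embedding": 0.15,          # mean query-to-chunk similarity
    "citation_coverage": 0.20,  # share of claims with valid citations
    "grounding": 0.25,          # share of claims passing semantic validation
    "risk": 0.15,               # 1.0 for low-risk queries, lower for risky ones
}


def composite_confidence(signals: dict[str, float]) -> float:
    """Weighted sum of signals, each clamped to the 0-1 range."""
    return sum(
        weight * min(max(signals[name], 0.0), 1.0)
        for name, weight in WEIGHTS.items()
    )


def route(confidence: float, high_risk: bool) -> str:
    if high_risk or confidence < 0.65:
        return "human_queue"  # attach all retrieved evidence
    if confidence >= 0.82:
        return "auto_answer"
    return "answer_with_uncertainty_banner"


signals = {"retrieval": 0.8, "embedding": 0.7, "citation_coverage": 1.0,
           "grounding": 0.9, "risk": 0.5}
decision = route(composite_confidence(signals), high_risk=False)
```

In this example the composite score comes out at about 0.805, just under the auto-answer threshold, so the answer ships with an uncertainty banner. The high-risk check runs first, so a high score never overrides the risk class.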

In support environments, this routing model usually improves CSAT by 6-12% while reducing incident risk from wrong automated answers by 30-60%.

Latency and Cost Budgeting for Real Deployments

You need explicit SLOs. Otherwise, retrieval and guardrails creep until response time is unacceptable and cost per query doubles.

Typical latency budget (p95 target: 2.5-4.0s end-to-end for user)

- Query rewriting + policy checks: 40-120 ms

- Hybrid retrieval (vector + BM25 parallel): 80-250 ms

- Reranking (top-100 candidates): 120-450 ms

- Context assembly + dedup: 30-120 ms

- LLM generation (short factual answer): 900-2,400 ms

- Grounding validator (claim-level checks): 100-350 ms

- Total: 1.3-3.7 seconds p95 when the stage budgets above are summed (goal: < 3.5s for user-facing)
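Keeping the per-stage budget in code makes it easy to check the arithmetic and to flag the stage that is creeping. A minimal sketch using the stage ranges listed above follows; the stage keys and both helper functions are hypothetical names. Note that summing per-stage p95 values gives a conservative estimate, since the slowest cases of each stage rarely coincide in one request.

```python
# (lower, upper) p95 budget per stage in milliseconds, from the list above.
LATENCY_BUDGET_MS = {
    "query_rewrite_and_policy": (40, 120),
    "hybrid_retrieval": (80, 250),
    "reranking": (120, 450),
    "context_assembly": (30, 120),
    "llm_generation": (900, 2400),
    "grounding_validator": (100, 350),
}


def total_budget_ms(budget: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the lower and upper bounds across all stages."""
    low = sum(lo for lo, _ in budget.values())
    high = sum(hi for _, hi in budget.values())
    return low, high


def stages_over_budget(
    measured_p95_ms: dict[str, float], budget: dict[str, tuple[int, int]]
) -> list[str]:
    """Stages whose measured p95 exceeds the upper bound of their budget."""
    return [
        stage
        for stage, (_, upper) in budget.items()
        if measured_p95_ms.get(stage, 0) > upper
    ]
```

Running `stages_over_budget` against weekly telemetry turns "latency crept up" into a named stage with an owner.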

Cost per 1,000 queries (illustrative mid-size setup, Q1 2026 pricing)

- Embeddings amortized (one-time on ingest + refresh cadence): $0.50-$8.00 depending on doc growth and re-embedding rate.

- Vector + keyword retrieval infra: $8-$60 (managed cloud) or $3-$25 (self-hosted amortized).

- Reranking (API or self-hosted): $4-$35.

- LLM generation (token cost + API overhead): $25-$180 based on model (GPT-4 vs Llama vs Claude) and context window.

- Total typical band: $38-$280 per 1,000 queries (median ~$90 for mid-tier setup).

Main cost drivers are prompt length (evidence context), model choice, and query volume. Retrieval infrastructure is a minor share (about $8-$60 of the $38-$280 band above); generation dominates.

Evaluation Framework You Can Run Weekly

Use a two-layer evaluation setup: offline benchmark + online production telemetry. Don’t ship changes that fail offline gates.

Offline eval (RAGAS + task-specific metrics)

- Faithfulness: is answer supported by provided context? (0-1 score, target > 0.85).

- Answer relevance: does it address the user question? (0-1 score, target > 0.88).

- Context precision: how much retrieved context was actually useful? (target > 0.80).

- Context recall: did retrieval include the needed evidence? (target > 0.85 on gold set).

- Citation accuracy: are citations valid and correctly mapped to chunks? (target > 0.95).

- Unsupported claim rate: what % of claims fail grounding? (target < 1%).

Build a gold dataset of 200-500 real queries split by intent: policy lookup, troubleshooting, how-to, procedural, edge cases. Keep 15-20% adversarial queries with ambiguous wording or intentional conflicts in docs.

Online eval (production metrics)

- Acceptance rate (user took suggested answer without escalation or correction).

- Human override rate (user rejected auto-answer and asked human).

- Unsupported claim rate from grounding checker (track this per intent).

- Latency percentiles: p50 / p95 / p99 (track separately for auto vs. escalated).

- Cost per successful answer (total spend divided by accepted answers, not just API cost per call).

- Incident count from wrong answers in high-risk flows (billing, compliance, security).

- User satisfaction delta (CSAT impact of RAG changes, measure via surveys).

Release gate example (from production teams)

- Faithfulness ≥ 0.85 (measured on offline gold set)

- Citation accuracy ≥ 0.95 (zero hallucinated citations)

- p95 latency ≤ 3.5s (user-facing SLO)

- High-risk unsupported claim rate ≤ 1.0% (billing/compliance/security queries)

- No regression in user acceptance rate (must stay within 2% of baseline)

If one gate fails, do not ship the retrieval change. Debug it in canary or staging first.
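A release gate like this is easiest to enforce when it is a function that CI calls. The sketch below encodes the example thresholds above; the metric key names are assumptions, and "within 2% of baseline" is interpreted here as an absolute drop of at most 0.02 in acceptance rate, which you may want to define differently.

```python
import operator

# metric name -> (comparison the metric must satisfy, threshold)
GATES = {
    "faithfulness": (operator.ge, 0.85),
    "citation_accuracy": (operator.ge, 0.95),
    "p95_latency_s": (operator.le, 3.5),
    "high_risk_unsupported_claim_rate": (operator.le, 0.01),
}


def failed_gates(
    metrics: dict[str, float],
    baseline_acceptance_rate: float,
    max_acceptance_drop: float = 0.02,
) -> list[str]:
    """Return the names of gates that fail; an empty list means ship."""
    failures = [
        name
        for name, (passes, threshold) in GATES.items()
        if not passes(metrics[name], threshold)
    ]
    # Regression check against the current production baseline.
    if baseline_acceptance_rate - metrics["acceptance_rate"] > max_acceptance_drop:
        failures.append("acceptance_rate")
    return failures
```

Returning the failing gate names rather than a bare boolean gives the person debugging in canary or staging a starting point.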

Field Reality: What Fails After Launch

- Index drift: data connectors fail silently and freshness degrades over days. Fix with ingestion SLIs, alerting on age of newest chunks, and automated rollback.

- Permission leaks: ACL filters are skipped in one retrieval path (vector but not BM25, for example). Fix with centralized authorization middleware and periodic audit queries.

- Prompt bloat: teams keep adding rules to the system prompt; latency spikes 30-40% over months. Fix with prompt budget ownership and quarterly cleanup sprints.

- No ownership: model team owns generation, platform team owns retrieval, nobody owns the end-to-end faithfulness KPI. Assign one RAG owner with release authority and incident response.

- Silent quality loss: new docs with different structure break your chunking logic; retrieval recall drops 5-10% without anyone noticing. Fix with weekly RAGAS regression tests.

Implementation Checklist for CTOs and VP Engineering

- Set measurable targets: faithfulness, citation accuracy, p95 latency, cost/query, incident rate.

- Ship hybrid retrieval from day one (do not start with vector-only).

- Use reranking before generation (non-negotiable for enterprise).

- Enforce structured citations with validators, not prompt suggestions.

- Introduce confidence-based fallback routing for high-risk intents (compliance, billing, security).

- Run weekly RAGAS-based regression tests on a fixed 300+ query gold set.

- Instrument everything: retrieval hit/miss by category, unsupported claims, escalation reasons, latency, cost.

- Version prompts, chunking configs, reranker weights, and indexes so rollbacks are possible.

- Assign single RAG owner with release authority; make them responsible for faithfulness KPI.

- Schedule monthly review of production incidents related to wrong answers; adjust confidence thresholds based on patterns.

90-Day Rollout Plan (From Working Teams)

Days 1-30: establish baseline and plumb infrastructure

- Ship ingestion connectors, semantic chunking (400-500 token targets), and hybrid retrieval (vector + BM25).

- Spin up vector store (managed Pinecone or self-hosted Qdrant) and keyword index.

- Create the first 300-500 query gold evaluation set from real user traffic (label 15% as adversarial).

- Instrument retrieval metrics: recall@k, hit rate, latency, cost. Track by query intent.

Days 31-60: reduce wrong answers and add guardrails

- Add reranking (Cohere Rerank or open-source), grounding validator, and confidence scoring.

- Implement confidence-based routing: auto-answer (≥ 0.82), uncertain answer (0.65 to < 0.82), escalate (< 0.65).

- Tune chunk size and overlap by query category rather than one global setting.

- Run weekly regression tests and block releases that miss quality gates.

- Build anomaly detection on index freshness and ingestion pipeline health.

Days 61-90: scale safely and optimize cost

- Introduce tenant-level or intent-level SLO dashboards and error budgets.

- Optimize token budgets to lower generation cost per query without hurting faithfulness (> 2-3% drop is a red flag).

- Expand automation only in intents where unsupported claim rate is consistently < 1.0%.

- Plan for next phase: add domain-specific fine-tuning of reranker or embedding model if needed.

FAQ

How large should top-k retrieval be before reranking?

Start with top-100 from vector and top-100 from BM25 (deduped = ~150-180 candidates), then rerank to top 8-12 for generation. Smaller candidate pools often miss critical evidence on complex enterprise queries. Monitor recall@k on your eval set and adjust.

Can I use only pgvector to reduce stack complexity?

Yes for moderate scale (< 500k vectors) and lower QPS (< 50 q/s), especially when Postgres is already your operational center. Benchmark p95 latency and recall under real ACL filters before committing. Most teams find Postgres-only hits p95 latency issues above 200k vectors with complex filters.

Is reranking always worth the cost?

For customer-facing or compliance-sensitive flows, yes—it’s 5-10% of total cost and removes 15-25% of wrong answers. In internal low-risk assistants, you can make reranking conditional on ambiguous queries to cut cost by 30-40%.

What is a good first milestone before broad rollout?

Hit faithfulness ≥ 0.85 and citation accuracy ≥ 0.95 on a representative 300-query gold set, with p95 latency ≤ 3.5s, then run a limited canary launch (5-10% traffic) with escalation always enabled for high-risk intents. Monitor incident rate; if ≤ 2 incidents per week, ramp to 100%.

Should I invest in fine-tuning embeddings for my domain?

Start with off-the-shelf models (text-embedding-3-large or bge-large). After 8-12 weeks of production data, if recall is still < 0.82 on your eval set, consider domain-specific fine-tuning of embeddings (3-4 week project, ~50-200 high-quality labeled query-document pairs needed). Expected gain: +3-8 points on recall.

How do I handle PDFs with embedded images or scanned documents?

For production RAG, avoid scanned PDFs (OCR errors propagate into retrieval). For native PDFs with images: extract images separately, run multimodal embedding (e.g., CLIP) on images, store image embeddings in secondary index, route image-heavy queries to image index. Most teams skip this layer initially and add if user feedback indicates image content is important.

References

- Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks (Lewis et al., 2020) — foundational RAG paper

- RAGAS: Automated Evaluation of Retrieval Augmented Generation (Es et al., 2024) — evaluation framework

- Self-RAG: Learning to Retrieve, Generate, and Critique for Self-Improved Generation (Asai et al., 2023) — confidence and reflection

- ColBERT: Efficient and Effective Passage Search via Contextualized Late Interaction over BERT (Khattab & Zaharia, 2020) — late-interaction retrieval architecture

- Pinecone Documentation and Benchmarks — managed vector DB

- Qdrant Documentation — open-source vector DB

- Weaviate Documentation — hybrid search engine

- pgvector Documentation — Postgres vector extension

- OpenAI Embeddings Guide — text-embedding-3 models and benchmarks

- Cohere Rerank v3 Documentation — ranking and reranking

- Elasticsearch Reference — BM25 tuning and hybrid search

- BGE Large Embedding Model (Hugging Face) — open-source retriever

- RAG Evaluation Checklist (Community Template) — practical eval template

Build It So Wrong Answers Are Hard, Not Easy

Production RAG is a systems problem, not a prompt problem. Retrieval quality, ranking, citation controls, and fallback logic matter far more than prompt wording or model size. Teams that treat RAG like a reliability discipline get predictable, measurable outcomes. Teams that treat it like a demo layer with nice prompts get confident errors at scale.

The 2026 baseline for enterprise RAG is: hybrid retrieval + reranking + grounding validators + confidence routing. If you’re not doing all four, you’re debugging hallucinations in production.

AINinza is powered by Aeologic Technologies. If you want a production RAG architecture review with hard metrics, a real eval framework, and a rollout plan tailored to your stack, talk to us at https://aeologic.com/. We build these systems for enterprises shipping RAG to production.

1 Comment

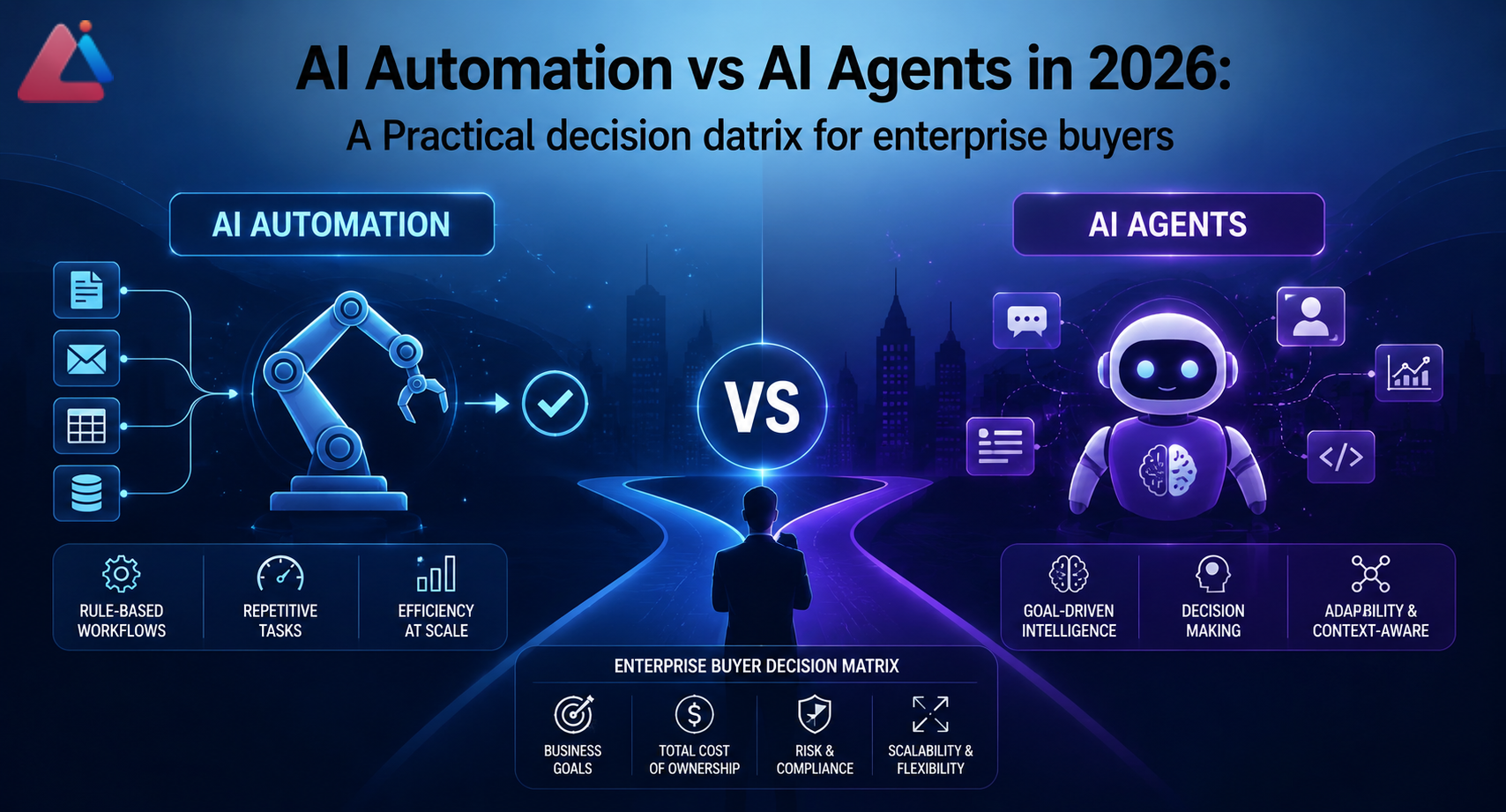

[…] The right architecture is “automation first, agents for exceptions.” This is why production RAG systems combine deterministic retrieval (automation) with agentic reasoning (for ranking and […]